Posts tagged LLMs

Best LLMs for RAG: Top Open And Closed Source Models

Top Open And Closed Source LLMs For Short, Medium and Long Context RAG

Survey of Hallucinations in Multimodal Models

An exploration of type of hallucinations in multimodal models and ways to mitigate them.

5 Techniques for Detecting LLM Hallucinations

A survey of hallucination detection techniques

Addressing GenAI Evaluation Challenges: Cost & Accuracy

Learn to do robust evaluation and beat the current SoTA approaches

Galileo & Google Cloud: Evaluating GenAI Applications

Galileo on Google Cloud accelerates evaluating and observing generative AI applications.

Galileo Luna: Advancing LLM Evaluation Beyond GPT-3.5

Research backed evaluation foundation models for enterprise scale

RAG LLM Prompting Techniques to Reduce Hallucinations

Dive into our blog for advanced strategies like ThoT, CoN, and CoVe to minimize hallucinations in RAG applications. Explore emotional prompts and ExpertPrompting to enhance LLM performance. Stay ahead in the dynamic RAG landscape with reliable insights for precise language models. Read now for a deep dive into refining LLMs.

Mastering RAG: 8 Scenarios To Evaluate Before Going To Production

Learn how to Master RAG. Delve deep into 8 scenarios that are essential for testing before going to production.

A Metrics-First Approach to LLM Evaluation

Learn about different types of LLM evaluation metrics needed for generative applications

Webinar: Mitigating LLM Hallucinations with Deeplearning.ai

Join in on this workshop where we will showcase some powerful metrics to evaluate the quality of the inputs (data quality, RAG context quality, etc) and outputs (hallucinations) with a focus on both RAG and fine-tuning use cases.

Mastering Agents: Build And Evaluate A Deep Research Agent with o3 and 4o

A step-by-step guide for evaluating smart agents

Pinecone + Galileo = get the right context for your prompts

Galileo LLM Studio enables Pineonce users to identify and visualize the right context to add powered by evaluation metrics such as the hallucination score, so you can power your LLM apps with the right context while engineering your prompts, or for your LLMs in production

Optimizing LLM Performance: RAG vs. Fine-Tuning

A comprehensive guide to retrieval-augmented generation (RAG), fine-tuning, and their combined strategies in Large Language Models (LLMs).

Generative AI and LLM Insights: February 2024

February's AI roundup: Pinterest's ML evolution, NeurIPS 2023 insights, understanding LLM self-attention, cost-effective multi-model alternatives, essential LLM courses, and a safety-focused open dataset catalog. Stay informed in the world of Gen AI!

Introducing Our Agent Leaderboard on Hugging Face

We built this leaderboard to answer one simple question: "How do AI agents perform in real-world agentic scenarios?"

Metrics for Evaluating LLM Chatbot Agents - Part 2

A comprehensive guide to metrics for GenAI chatbot agents

Metrics for Evaluating LLM Chatbot Agents - Part 1

A comprehensive guide to metrics for GenAI chatbot agents

Mastering Agents: Evaluating AI Agents

Top research benchmarks for evaluating agent performance for planning, tool calling and persuasion.

Tricks to Improve LLM-as-a-Judge

Unlock the potential of LLM Judges with fundamental techniques

5 Key Takeaways from Biden's AI Executive Order

Explore the transformative impact of President Biden's Executive Order on AI, focusing on safety, privacy, and innovation. Discover key takeaways, including the need for robust Red-teaming processes, transparent safety test sharing, and privacy-preserving techniques.

Measuring What Matters: A CTO’s Guide to LLM Chatbot Performance

Learn to bridge the gap between AI capabilities and business outcomes

State of AI 2024: Business, Investment & Regulation Insights

Industry report on how generative AI is transforming the world.

Introducing the Hallucination Index

The Hallucination Index provides a comprehensive evaluation of 11 leading LLMs' propensity to hallucinate during common generative AI tasks.

Introducing ChainPoll: Enhancing LLM Evaluation

ChainPoll: A High Efficacy Method for LLM Hallucination Detection. ChainPoll leverages Chaining and Polling or Ensembling to help teams better detect LLM hallucinations. Read more at rungalileo.io/blog/chainpoll.

Generative AI and LLM Insights: April 2024

Smaller LLMs can be better (if they have a good education), but if you’re trying to build AGI you better go big on infrastructure! Check out our roundup of the top generative AI and LLM articles for April 2024.

Mastering RAG: How To Architect An Enterprise RAG System

Explore the nuances of crafting an Enterprise RAG System in our blog, "Mastering RAG: Architecting Success." We break down key components to provide users with a solid starting point, fostering clarity and understanding among RAG builders.

Understanding LLM Hallucinations Across Generative Tasks

The creation of human-like text with Natural Language Generation (NLG) has improved recently because of advancements in Transformer-based language models. This has made the text produced by NLG helpful for creating summaries, generating dialogue, or transforming data into text. However, there is a problem: these deep learning systems sometimes make up or "hallucinate" text that was not intended, which can lead to worse performance and disappoint users in real-world situations.

Generative AI and LLM Insights: March 2024

Stay ahead of the AI curve! Our February roundup covers: Air Canada's AI woes, RAG failures, climate tech & AI, fine-tuning LLMs, and synthetic data generation. Don't miss out!

Mastering RAG: How To Observe Your RAG Post-Deployment

Learn to setup a robust observability solution for RAG in production

Mastering Data: Generate Synthetic Data for RAG in Just $10

Learn to create and filter synthetic data with ChainPoll for building evaluation and training dataset

Is Llama 3 better than GPT4?

Llama 3 insights from the leaderboards and experts

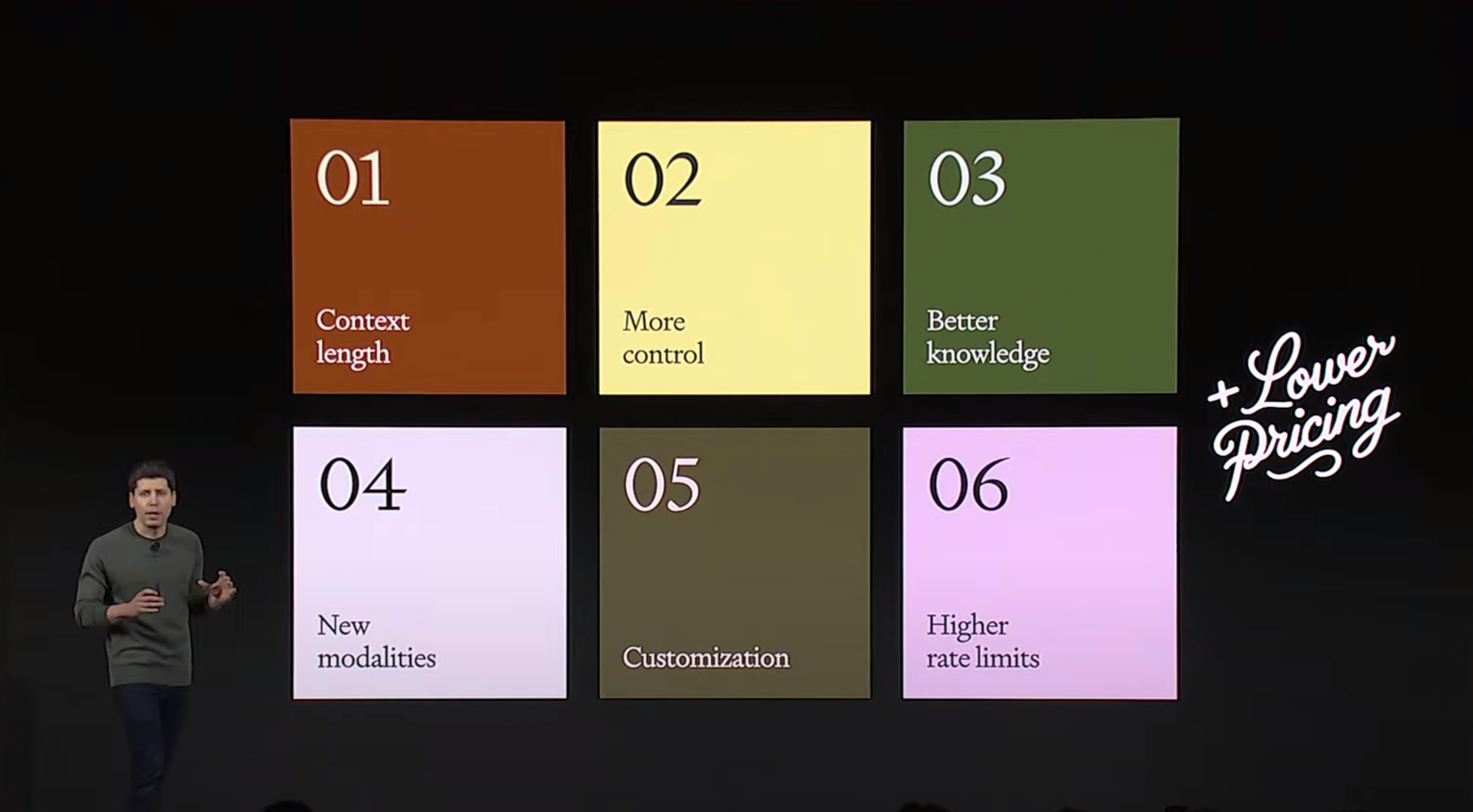

15 Key Takeaways From OpenAI Dev Day

Galileo's key takeaway's from the 2023 Open AI Dev Day, covering new product releases, upgrades, pricing changes and many more!

A Framework to Detect & Reduce LLM Hallucinations

Learn about how to identify and detect LLM hallucinations

Generative AI and LLM Insights: August 2024

While many teams have been building LLM applications for over a year now, there is still much to learn about RAG and all types of hallucinations. Check out our roundup of the top generative AI and LLM articles for August 2024.

Generative AI and LLM Insights: May 2024

The AI landscape is exploding in size, with some early winners emerging, but RAG reigns supreme for enterprise LLM systems. Check out our roundup of the top generative AI and LLM articles for May 2024.

Mastering RAG: Adaptive & Corrective Self RAFT

A technique to reduce hallucinations drastically in RAG with self reflection and finetuning

Mastering RAG: How To Evaluate LLMs For RAG

Learn the intricacies of evaluating LLMs for RAG - Datasets, Metrics & Benchmarks