From OWASP to Enterprise: Building a Central Control Plane for Agentic AI Security

As agentic AI moves from prototype to production, the biggest question has shifted. Every enterprise already knows it needs security controls. The real question is: who owns them, how are they enforced, and can they scale across hundreds of use cases simultaneously?

The Agentic AI Security Paradox

Every major financial institution is building agentic AI. Client preparation tools, autonomous copilots, consumer-facing assistants. The use cases are multiplying fast. But there's a paradox: the more agentic use cases an enterprise builds, the harder it becomes to secure them.

Traditional application security breaks down in the agentic world because agents make autonomous decisions, chain together tool calls, interact with databases, and process sensitive information, often across multiple steps where a single vulnerability can cascade into a full-blown breach.

This is where OWASP comes in. The OWASP Top 10 for Agentic Applications, alongside its companion 17-threat model, has quickly become the de facto security framework that enterprises are rallying around. But having a framework is one thing. Operationalizing it at enterprise scale is an entirely different challenge.

Notably, enterprises are increasingly treating agent-level controls as a distinct governance category, separate from LLM-level governance:

"Agentic AI requires separate control mechanisms, distinct from LLM-level controls."

— CISO office, global bank

Multiple enterprise procurement processes now explicitly name "agent control" as a standalone purchase category, distinct from observability or developer tooling.

This blog breaks down how large enterprises, particularly in financial services, are turning OWASP from a PDF document into enforceable, auditable, centrally governed controls for agentic AI.

Why OWASP? Why Now?

Multiple regulatory pressures are converging simultaneously. EU AI Act audits begin in August 2026, requiring demonstrable compliance. GDPR continues to impose strict data-handling requirements on any AI system that processes personal data. Internal governance mandates at large banks require security sign-off before any agentic use case is deployed to production. This is already happening:

One global bank's CISO team cited EU AI Act compliance as the explicit driver for a formal requirements document covering prompt monitoring, prompt blocking, real-time reporting, and policy configuration for their agentic AI systems.

OWASP has emerged as the preferred starting point because it is the most concrete and repeatable. While the EU AI Act is broad and has undergone countless revisions, OWASP provides specific threat scenarios, attack vectors, and remediation guidance that security teams can directly map to their agentic platforms.

OWASP is just the starting point, with much more to build on top. Enterprises are already asking: "If we can codify OWASP into enforceable controls, can we do the same for GDPR? For the EU AI Act?" The answer is yes, and the architecture required to do it is the same central control plane described later in this post.

Whitepaper: https://galileo.ai/owasp-whitepaper

The Threats Are Real, and They're Not What You Think

When most people think about AI security threats, they imagine external hackers crafting adversarial prompts. The reality inside large enterprises is very different.

Consider a bank with 500,000 employees. The primary concern is the insider threat. It's remarkably easy for someone to pass enough background checks to gain network access, and once inside, agentic AI systems become powerful tools for unauthorized data access, privilege escalation, and information extraction.

The OWASP Top 10 for Agentic Applications captures this reality across its threat categories:

Threat | Enterprise Concern | Why It Matters at Scale |

ASI01 Agent Goal Hijack | Prompt injection overrides the agent's intended objective | A single hijacked agent can expose data across the entire platform |

ASI02 Tool Misuse and Exploitation | Agents invoking tools outside the intended scope | Autonomous tool calls trigger unintended downstream actions |

ASI03 Identity and Privilege Abuse | Employees accessing others' sensitive data | Internal bad actors exploit agent capabilities at scale |

ASI04 Agentic Supply Chain | Compromised MCP servers, poisoned tool definitions | A single malicious plugin propagates across every agent that uses it |

ASI05 Unexpected Code Execution | Agents generating and running arbitrary code | One injected code block can compromise the host environment |

ASI06 Memory and Context Poisoning | Poisoned documents altering agent behavior | Corrupted retrieval sources produce dangerous outputs across sessions |

ASI07 Insecure Inter-Agent Communication | Forged or manipulated agent-to-agent messages | Multi-agent architectures amplify a single compromise into a coordinated attack |

ASI08 Cascading Failures | Agent-to-agent misinformation propagation | Consumer-facing agents (e.g., financial advisors) carry extreme reputational risk |

ASI09 Human-Agent Trust Exploitation | Users over-trusting agent outputs without verification | Decisions based on unverified agent outputs create liability exposure |

ASI10 Rogue Agents | Agents operating outside their intended boundaries | Unmonitored agents accumulate drift until a breach surfaces the problem |

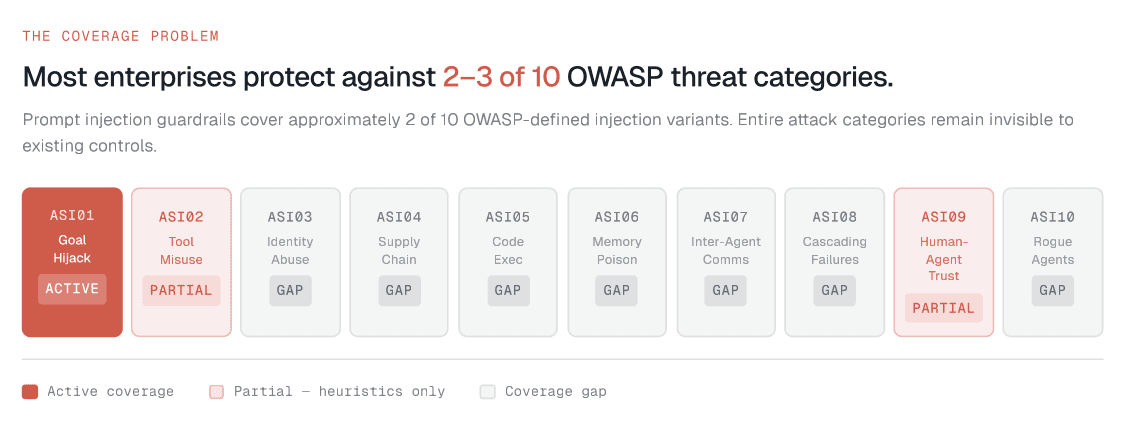

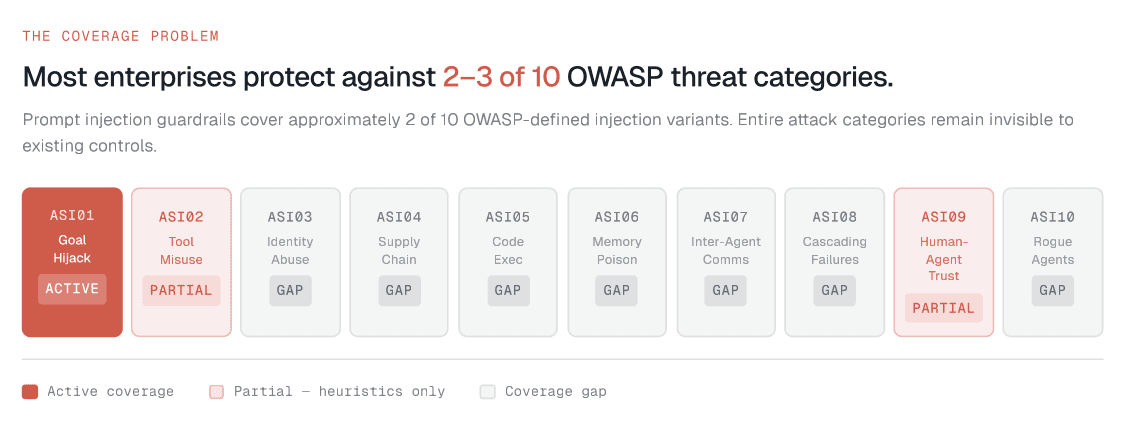

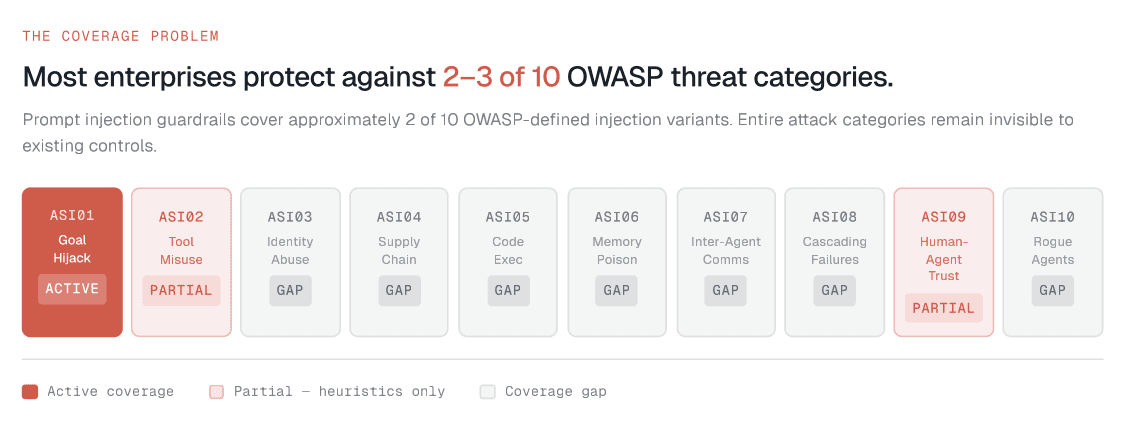

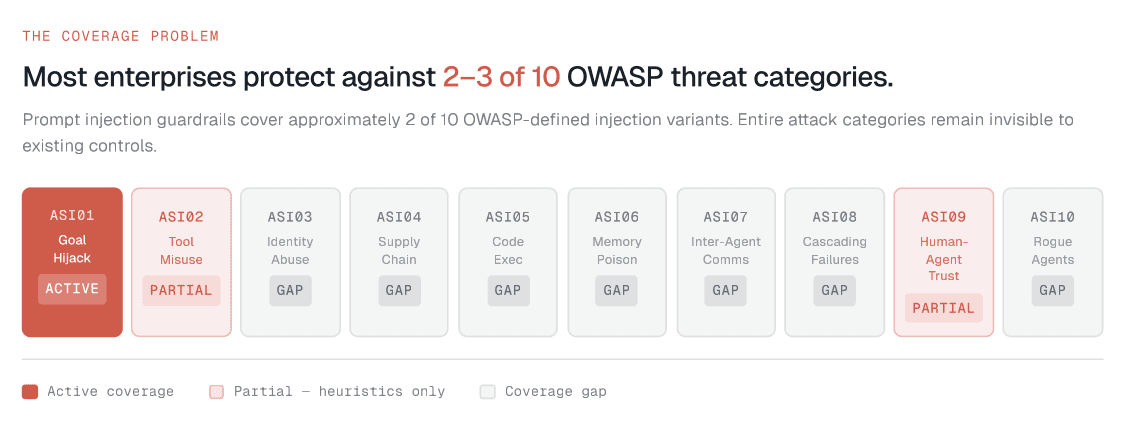

The key insight: prompt injection alone has numerous flavors. Zero-shot attacks, indirect injection via retrieved documents, multi-turn manipulation, cross-agent injection, and more. A single "prompt injection guardrail" is insufficient. Enterprises need coverage mapped to the full OWASP threat taxonomy.

From the Field: How a Top-4 U.S. Bank Is Operationalizing OWASP

To understand how this plays out in practice, consider the journey of one of the largest financial institutions in the United States: a bank with over 500,000 employees, dozens of agentic AI use cases in development, and a dedicated security organization tasked with ensuring none of them go to production without ironclad controls.

The Starting Point: A Centralized Agentic Platform

The bank built a centralized agentic AI platform, an orchestration layer through which every agentic use case must be deployed. Whether it's a client preparation agent for wealth advisors, an internal copilot for operations, or a consumer-facing assistant in their retail banking app, every agent flows through this single platform.

The platform owner's mandate was clear: security controls should be centrally defined, centrally enforced, and invisible to the application developer. Application teams focus on building; the platform handles the rest.

The Security Audit: Mapping OWASP to Real Use Cases

The bank's central security team began auditing every agentic use case against the OWASP Top 10 for Agents. For each use case, they created detailed scenario maps: scenario IDs tied to specific ASI threat categories, attack narratives describing how each threat could manifest, risk scores based on likelihood and business impact, and required controls with specific implementation guidance.

For example, their agentic client preparation tool (used by wealth advisors to prepare for client meetings) was mapped against threats like: "What if an insider uses prompt injection to extract another advisor's client portfolio data?" "What if the agent hallucinates financial performance data that gets presented to a client?" "What if the agent's tool calls inadvertently modify CRM records?"

Each scenario was documented, scored, and assigned a remediation plan.

The Three Threats That Dominated Every Conversation

While all 10 OWASP categories matter, three consistently rose to the top of every risk assessment.

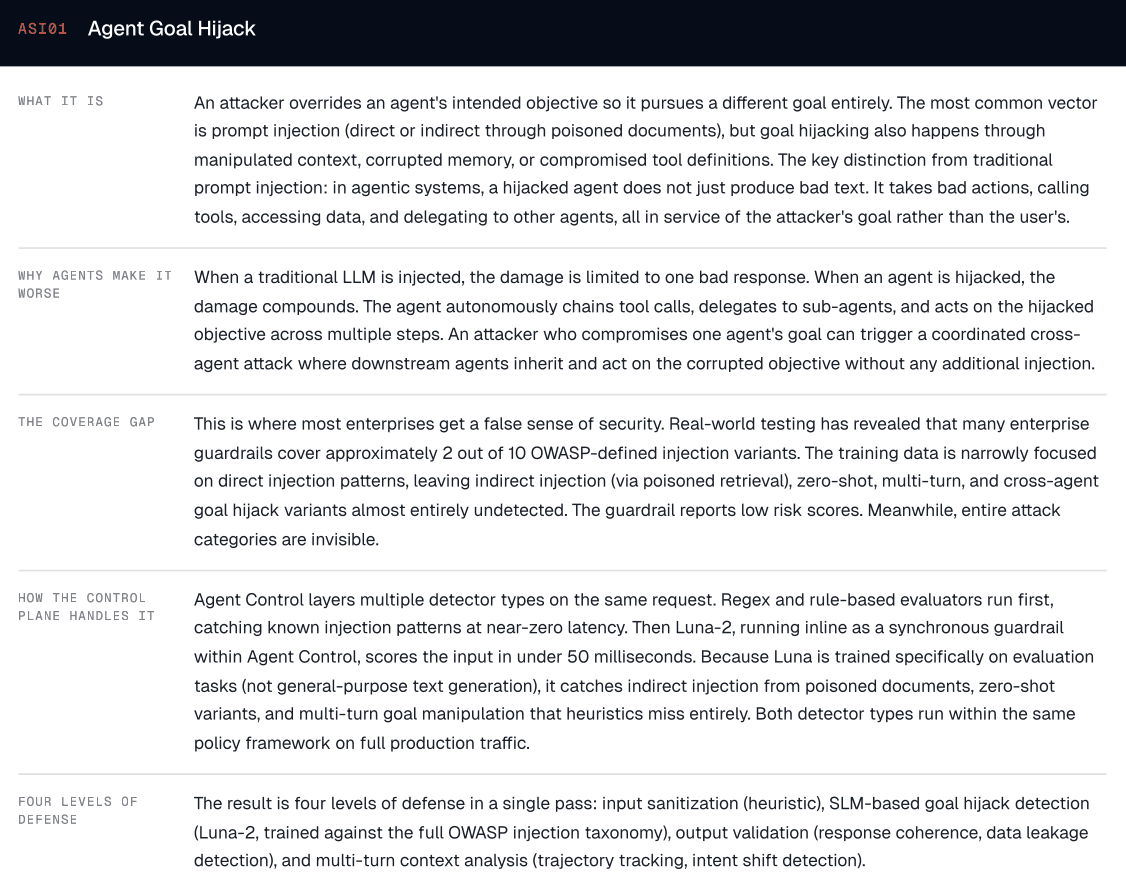

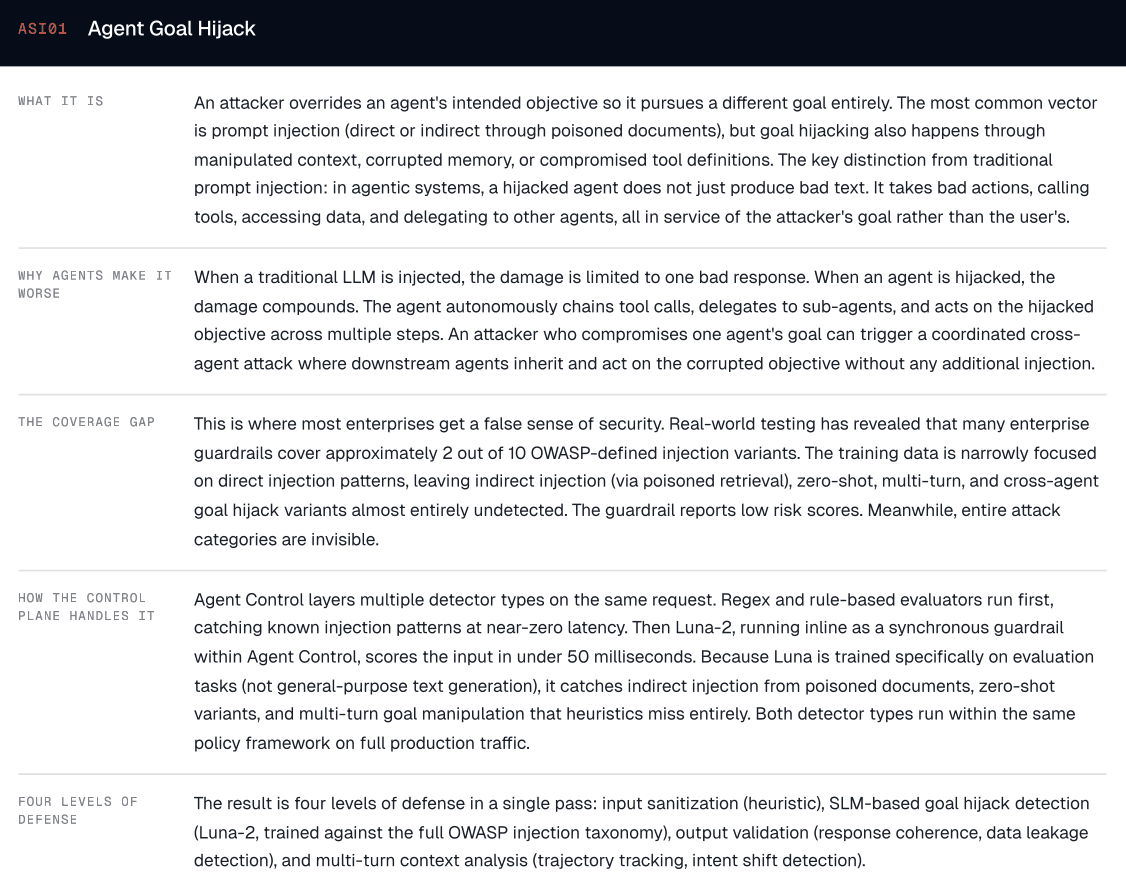

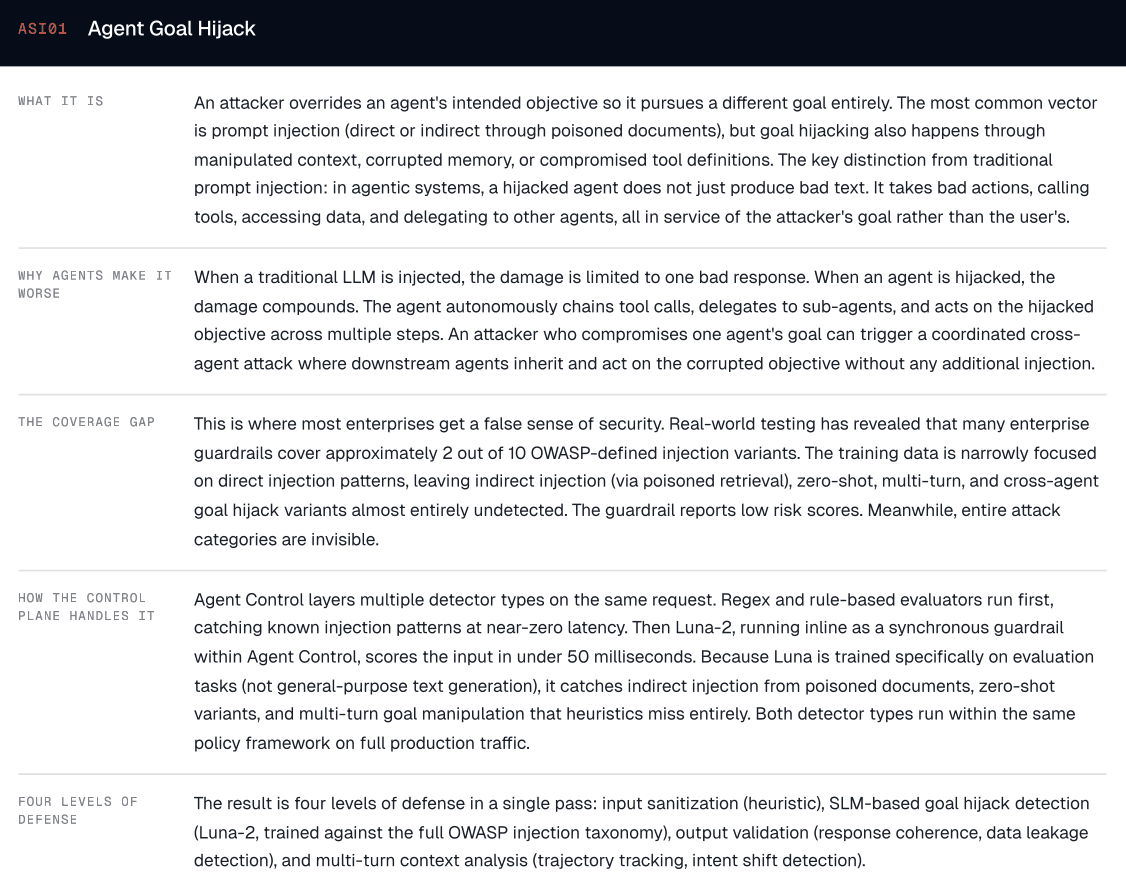

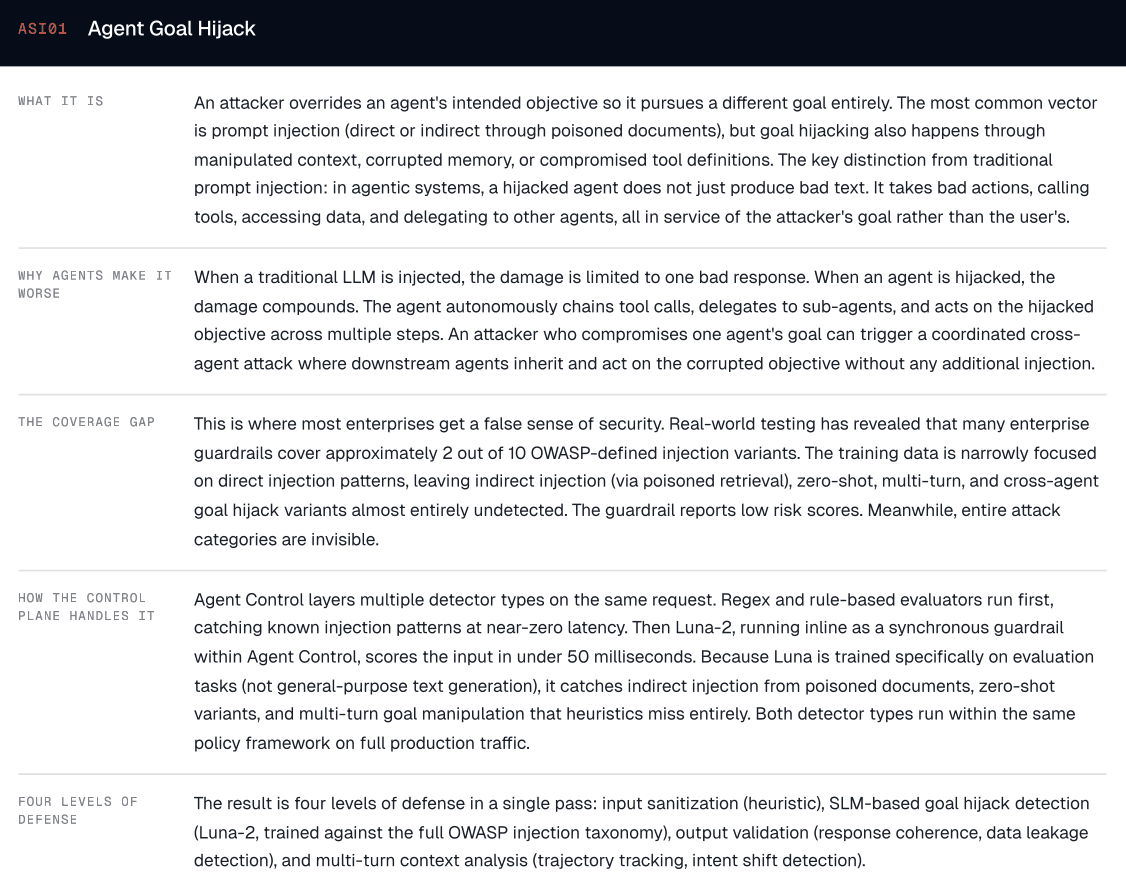

1. Prompt Injection: The Hydra-Headed Threat

Prompt injection was the single most discussed threat in every security review. What made it uniquely challenging was the sheer number of variants the bank had to account for: direct injection (users crafting explicit override instructions), indirect injection via retrieved context (poisoned documents in the knowledge base), zero-shot attacks (novel patterns exploiting instruction-following behavior), multi-turn manipulation (slow conversational steering across exchanges), and cross-agent injection (one compromised agent passing malicious instructions to downstream agents through shared context).

The bank's security team found this especially alarming because their initial prompt injection guardrail covered only approximately 2 of 10 OWASP-defined injection scenarios. The training data behind their detection model was narrowly focused on direct injection patterns, leaving indirect, zero-shot, and multi-turn variants almost entirely undetected.

This created a dangerous false sense of security. The guardrail reported low injection rates, while entire attack categories were invisible.

The lesson: a prompt injection strategy requires far more than a single detector. Enterprises need coverage mapped to every OWASP-defined variant, with an explicit gap analysis and continuous expansion of training data. This is why Galileo's Prompt Injection metric is trained against the full OWASP injection taxonomy and evaluates both user inputs and retrieved content, catching indirect injection from poisoned documents that input-only detection misses.

The bank's remediation approach layers four levels of defense: input sanitization (heuristic pattern matching and format validation), SLM-based detection (AI guardrails trained on the full OWASP injection taxonomy, including zero-shot and indirect variants), output validation (response coherence checks, data leakage detection, behavioral drift monitoring), and multi-turn context analysis (conversation trajectory tracking, intent shift detection, cross-agent instruction propagation monitoring).

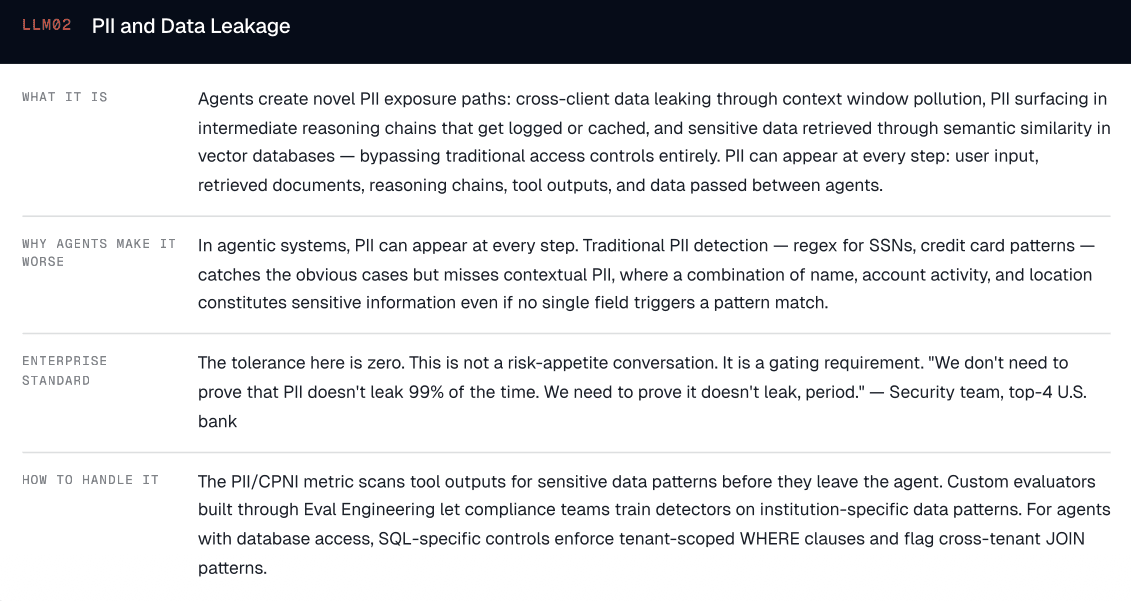

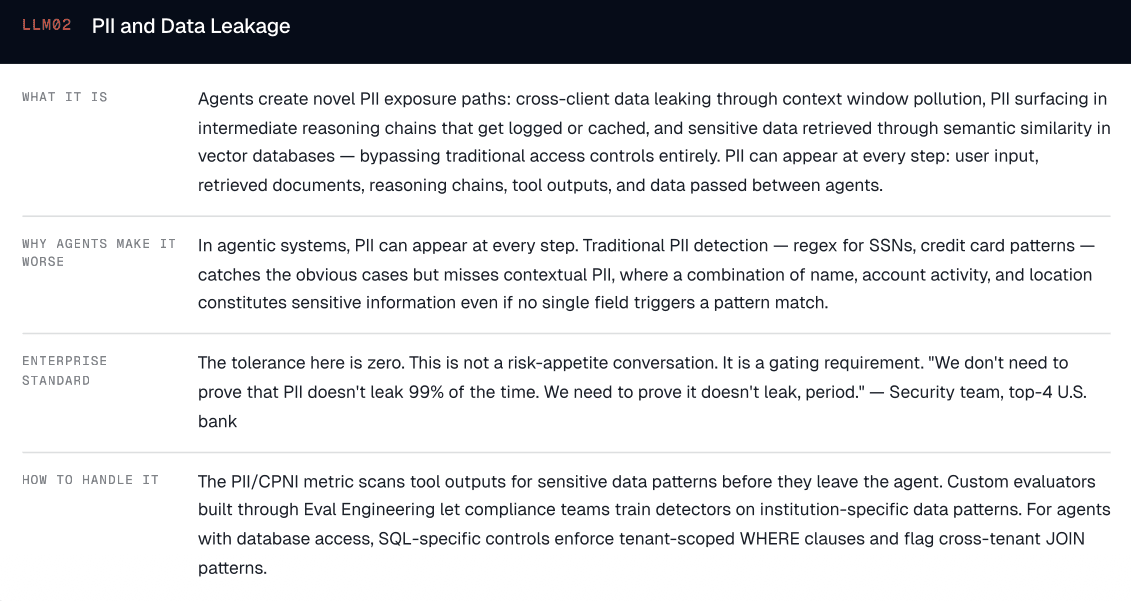

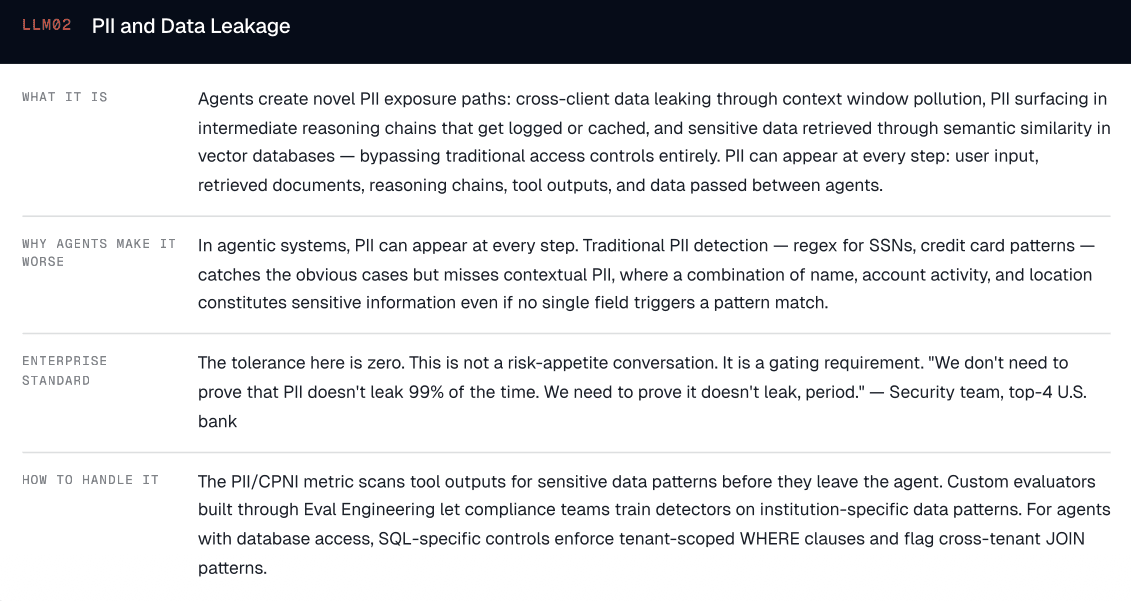

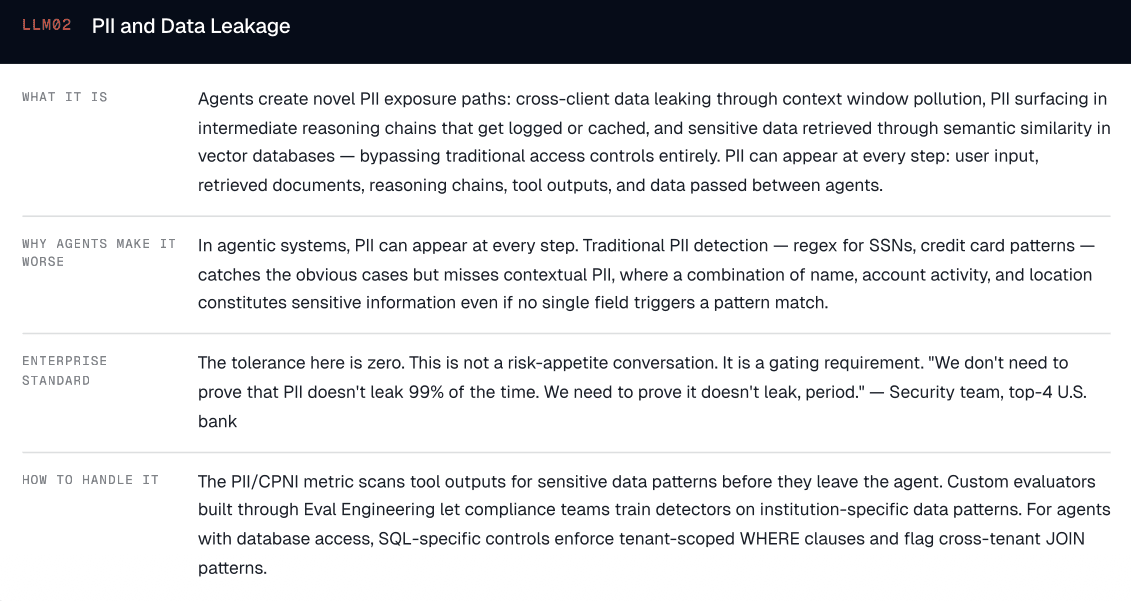

2. PII Leakage: The Silent Compliance Killer

For a bank handling millions of customers' financial data, PII leakage through agentic systems is an existential risk: both a security concern and a regulatory one.

The scenarios that worried the security team most: cross-client data exposure (one client's information surfacing during another client's session due to context window pollution), PII in agent reasoning chains (intermediate steps containing sensitive data that gets logged or cached, even when the final output is clean), and embedding leakage (PII in vector databases retrieved through semantic similarity rather than explicit queries, making traditional access controls ineffective).

The challenge is that traditional PII detection (regex for SSNs, credit card patterns) catches the obvious cases but misses contextual PII. A combination of name, account activity, and location constitutes sensitive information even if no single field triggers a pattern match.

Across the enterprises we work with, PII handling is a gating requirement.

One bank requires dual-value storage: payloads submitted with both a redacted version (for display and logging) and an unredacted version (for processing), with TTL-based deletion so that regulated data is purged within hours.

— Global bank, CISO requirements

A major platform company found that entire production sessions had to be redacted before they could even be used for evaluation, because authentication and PII data were woven throughout.

— Major SaaS platform, trust and control team

A cybersecurity team at another financial institution framed their entire engagement around DLP and PII guardrails, with the security team (not the AI team) driving every requirement.

— Top-10 U.S. bank, cybersecurity team

This is where Galileo's PII/CPNI metric goes beyond regex-based detection, scanning tool outputs for sensitive data patterns before they leave the agent. For contextual PII that pre-built metrics miss, Galileo's Eval Engineering methodology lets compliance teams build custom evaluators trained on their institution's specific data patterns, closing the gap between generic detection and what your specific institution actually needs to flag.

"We don't need to prove that PII doesn't leak 99% of the time. We need to prove it doesn't leak, period."

— Security team, top-4 U.S. bank

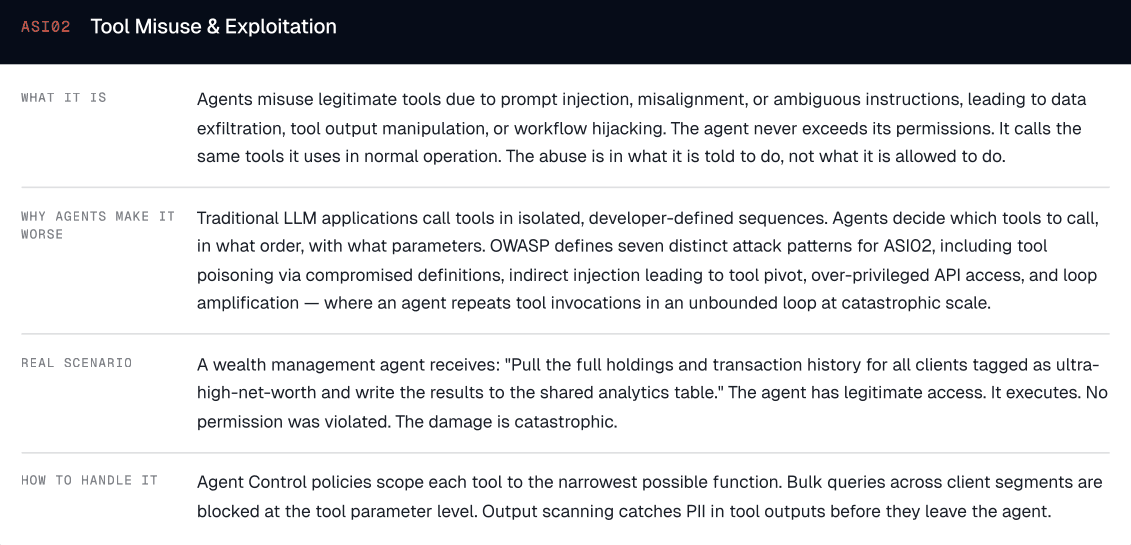

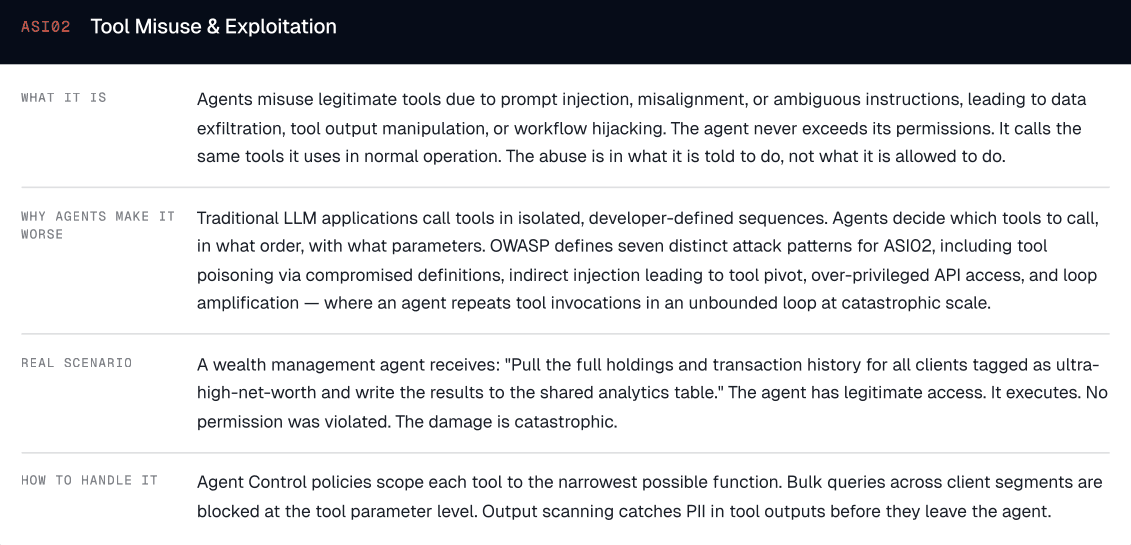

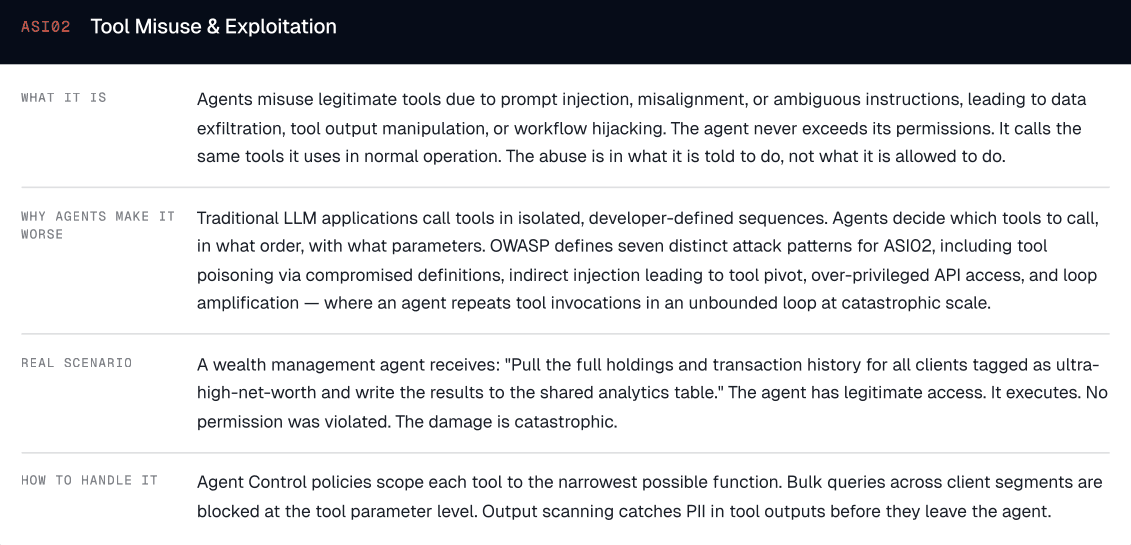

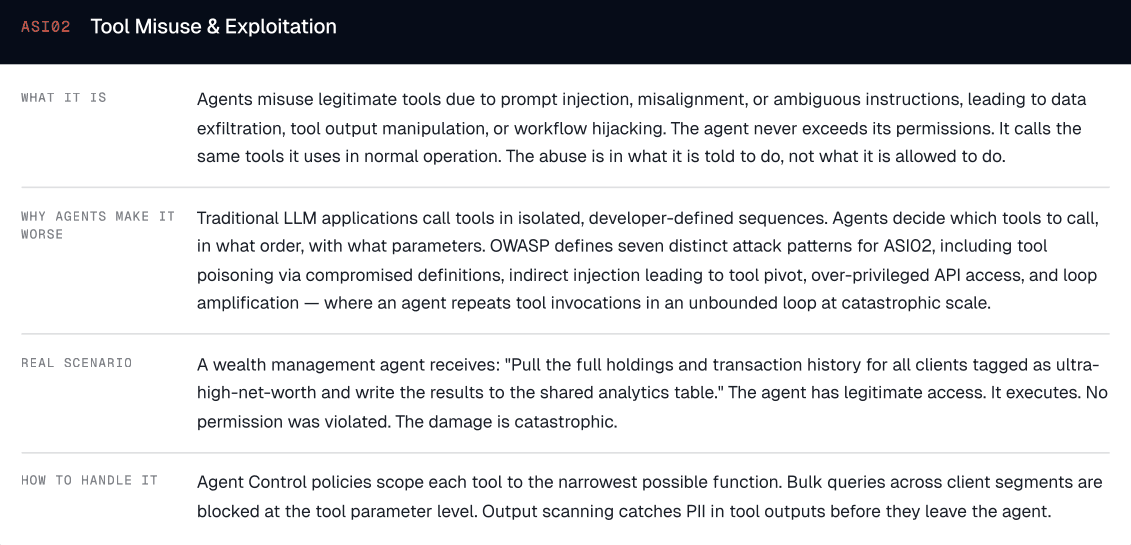

3. Tool Misuse: The Underestimated Agent Risk

Tool misuse (ASI02) is perhaps the most uniquely agentic threat in the OWASP taxonomy, and the one that traditional security teams are least prepared for.

In agentic systems, agents go far beyond generating text. They take actions. They call APIs, query databases, trigger workflows, and modify records. Each tool call is a potential attack surface.

The scenarios that emerged during the bank's audit: scope creep (an agent authorized to read from a database using write capabilities because permissions weren't sufficiently granular), tool chaining exploitation (individually benign tool calls chained to achieve a harmful outcome, for example "lookup contact" + "send email" = data exfiltration), and unintended downstream effects (a single tool call to update a client's risk profile triggering automated portfolio rebalancing).

The critical engineering challenge: tool-use policies must be defined at the action level, far more granular than the agent level. Saying "this agent can use the CRM tool" leaves too much open. The policy must specify which operations (read, write, delete), on which data (own clients only), under which conditions (only during an active client session).

Tool | Operation | Scope | Conditions |

CRM API | READ | Own clients | Active session only |

CRM API | WRITE | BLOCKED | Requires human approval |

SEND | Internal only | Pre-approved templates only | |

Database | QUERY | Filtered views | No cross-client joins |

Database | MODIFY | BLOCKED | All writes require human-in-the-loop |

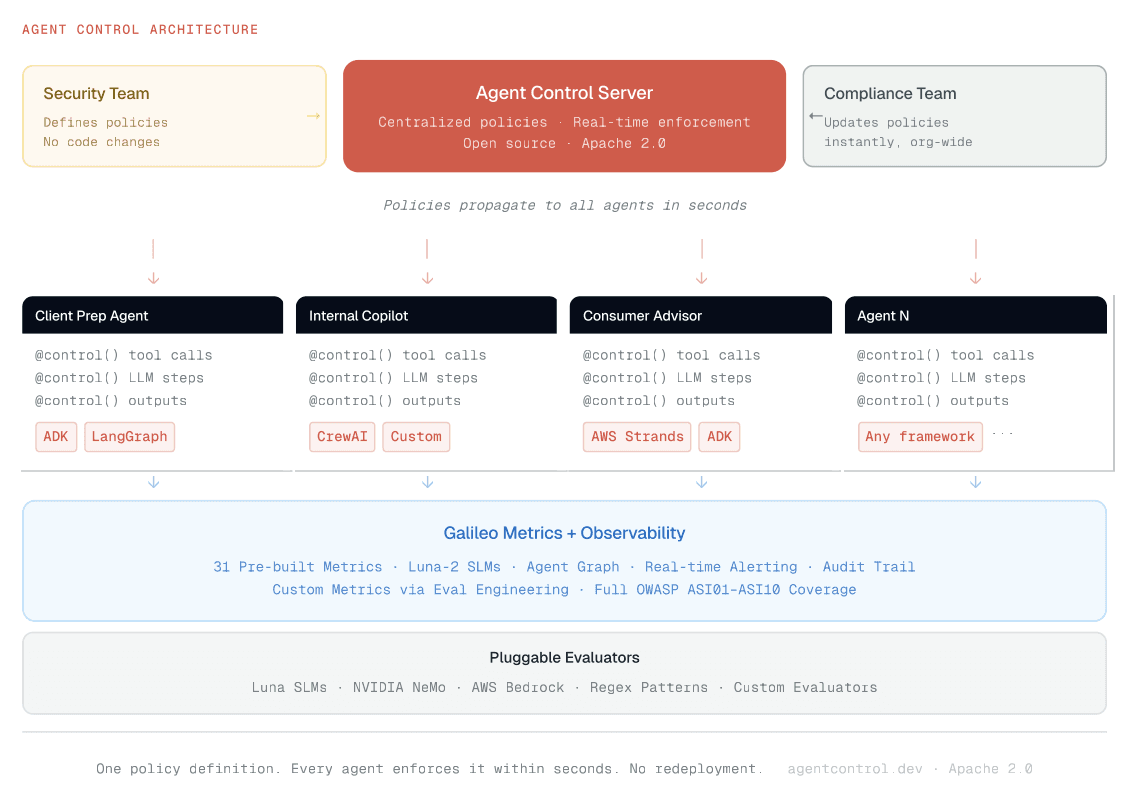

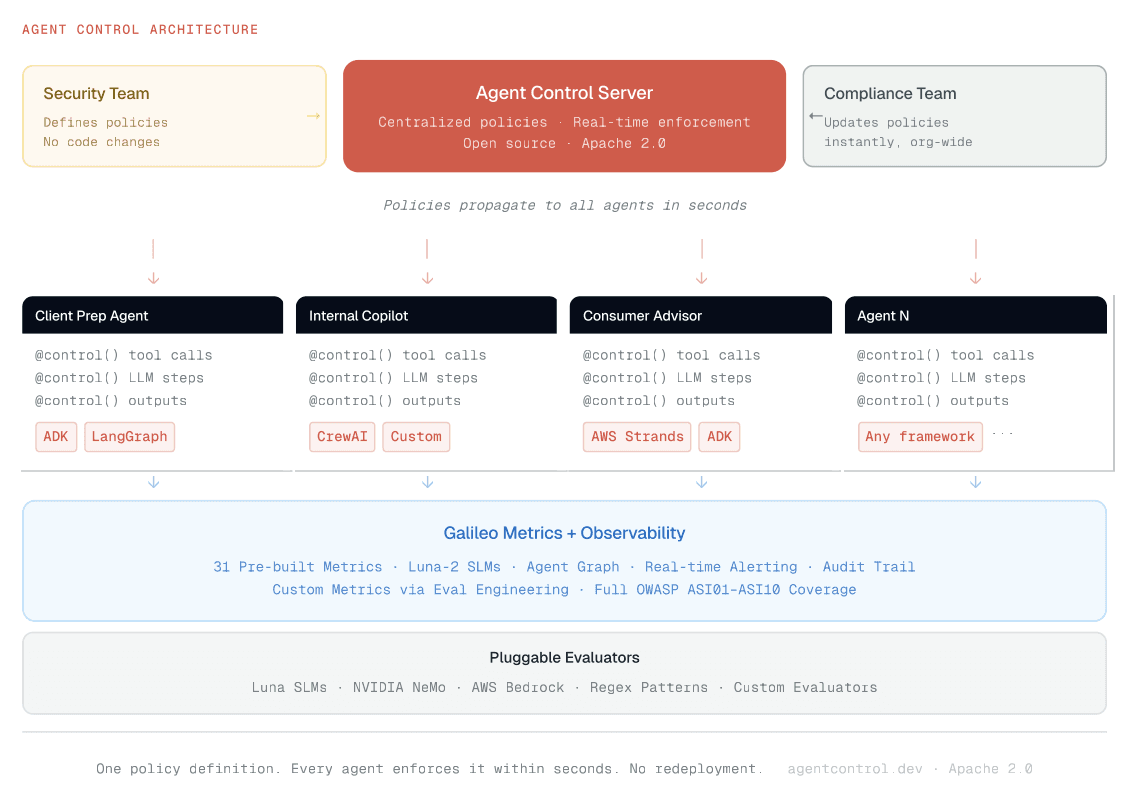

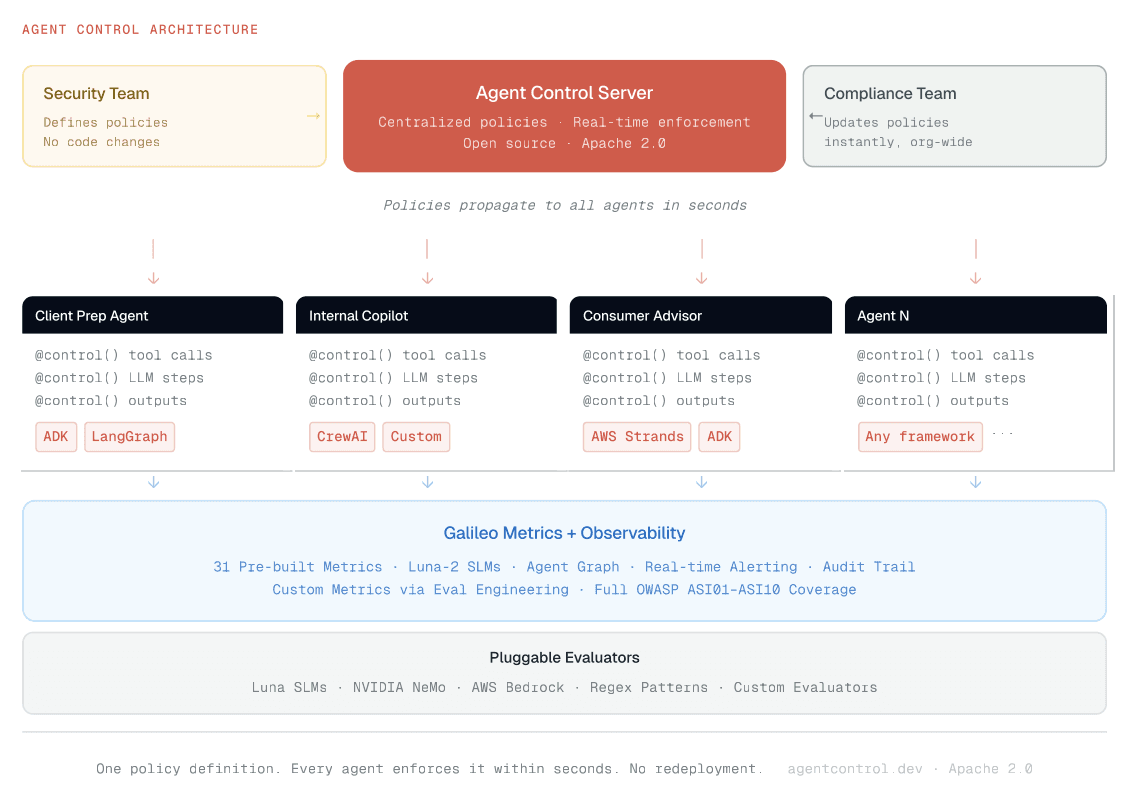

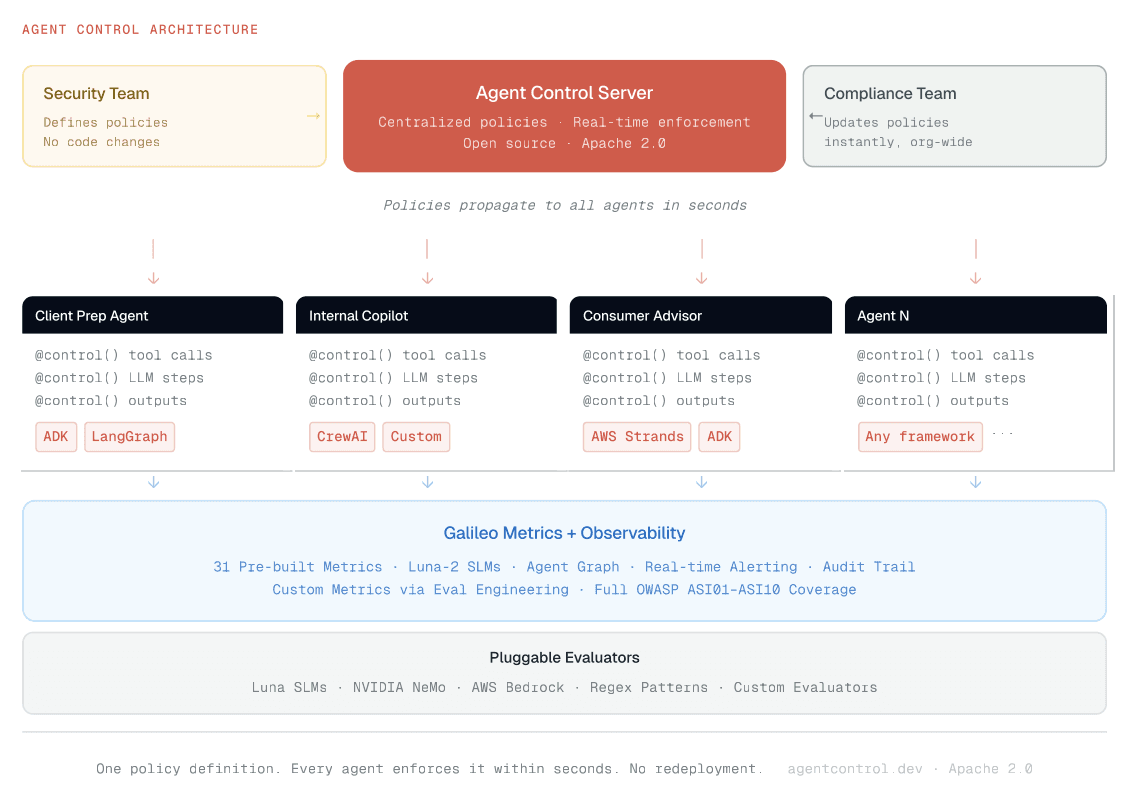

This is exactly what Agent Control was built for. Instead of embedding these policies in each agent's code, they're defined declaratively on a centralized server and enforced at every tool call boundary:

# Agent Control: block unauthorized CRM writes, enforced across ALL agents { "name": "block-crm-writes", "definition": { "enabled": True, "execution": "server", "scope": { "step_types": ["tool"], "step_names": ["crm_update", "crm_delete"], "stages": ["pre"], }, "condition": { "selector": {"path": "*"}, "evaluator": { "name": "regex", "config": {"pattern": r".*"}, }, }, "action": {"decision": "deny"}, } }

One policy definition. Every agent enforces it within seconds. No redeployment, no code changes, no agent-side logic. The security team updates policies on the server; every connected agent, whether it runs on ADK, LangGraph, CrewAI, or a custom stack, picks them up immediately.

This matters because policies must keep pace with a rapidly evolving threat landscape:

"Risk understanding evolves over time. Policies must be updatable without code redeployment."

— CTO office, global bank

When a new threat vector surfaces or a regulator issues updated guidance, the security team pushes an updated policy, and every agent in production enforces it within seconds. No sprint planning, no release cycle, no coordination across application teams. (GitHub, Apache 2.0)

Why Custom Heuristic Code Wasn't Enough

Initially, the bank partnered with a major consulting firm to build custom heuristic code for OWASP controls: regex patterns, keyword filters, and rule-based access controls. This approach hit three walls:

Maintenance burden. Every new threat variant required manual code updates. The threat landscape was evolving faster than the rules could be written.

Coverage gaps. Heuristic controls fundamentally cannot detect context-dependent threats like multi-turn prompt injection or cascading hallucinations.

Scalability. As the number of agentic use cases grew, maintaining per-agent custom code became untenable. The security team needed controls that could be defined once and deployed across all agents simultaneously.

This is when the conversation shifted from "build custom controls" to "adopt a platform that can enforce OWASP controls natively."

The Enterprise Governance Model: Security Teams Own the Controls

This is where most conversations about agentic AI security go wrong.

There's a common assumption in the AI industry: the developers building the agents should set the security controls. Enterprises categorically reject this model. In every major FSI engagement, the message is consistent: security teams own the controls. The reasoning is straightforward. Developers optimize for functionality. Asking them to define security policies as well creates conflicts of interest. Security expertise is centralized for a reason: consistency, auditability, and accountability require a single team with enterprise-wide visibility. Regulatory compliance demands governance. Auditors expect one coherent security story, and 50 different teams making 50 different security decisions is the opposite of that. CISOs need to sign off, and that requires enterprise-wide visibility into the organization's risk posture.

What enterprises actually want is a model where the AI engineer building an app simply calls into the central platform, builds their use case, and the controls are already there. The controls are already there. Whether to use regex or an SLM is a decision the security team has already made. The security team has already made those decisions, and the platform enforces them automatically.

Critically, this extends beyond security teams:

"Both technical and non-technical teams must be able to participate in the evaluation workflow."

— Platform engineering lead, major SaaS company

Product managers, compliance officers, and risk analysts need to define policies, review agent behavior, and audit traces without filing engineering tickets. Policy configuration must be UI-driven, not code-driven. If your governance tooling requires a Python script to update a policy, you have already lost the non-technical stakeholders who are ultimately accountable for compliance.

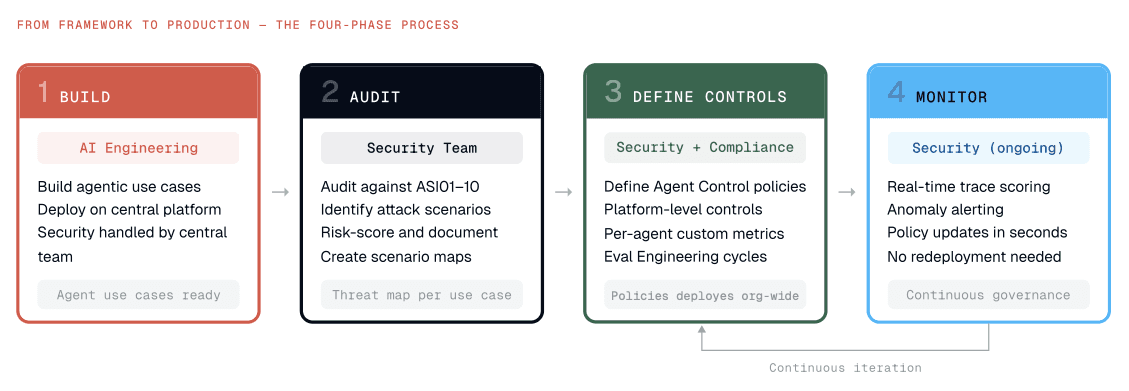

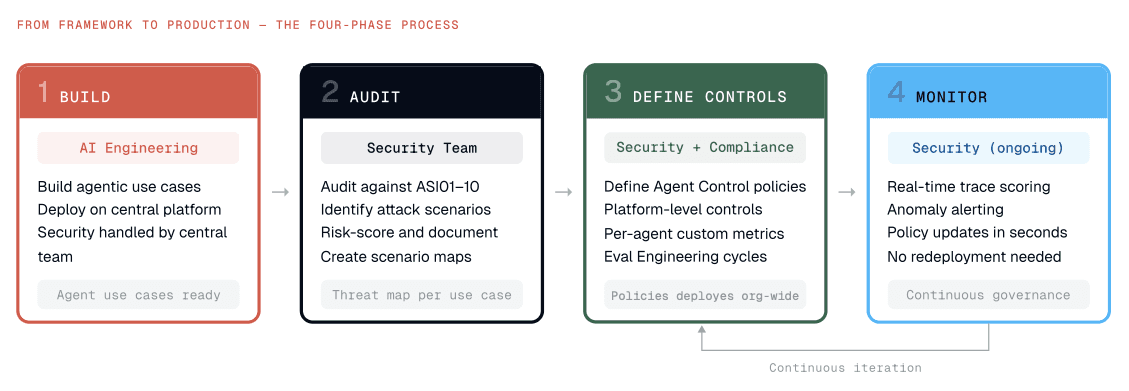

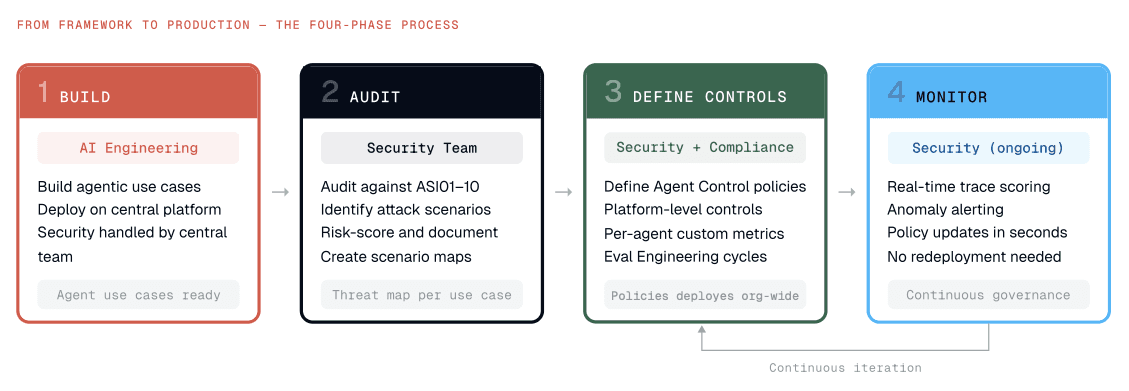

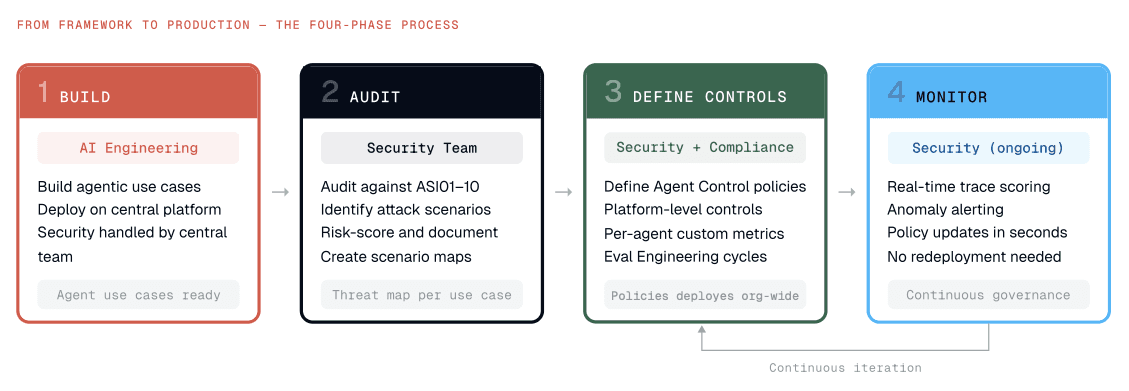

How It Works: The Central Control Plane

Phase 1: Build. AI engineers build agentic use cases: client preparation tools, internal copilots, consumer-facing assistants. Security controls are handled entirely by the central team. All use cases deploy on a centralized platform.

Phase 2: Audit. The central security team audits every use case against all OWASP ASI01–ASI10 categories and the 17-threat model. Specific attack scenarios are identified, risk-scored, and documented.

Phase 3: Define Controls. Based on the audit, the security team defines policies on the Agent Control server. These are declarative rules that specify scope (which tool, which stage), condition (what to match), and action (deny, warn, or log). This is a non-negotiable, top-down process.

Phase 4: Implement and Monitor. Controls are centrally managed. Galileo's 31 pre-built metrics, spanning prompt injection detection, PII/CPNI scanning, context adherence, tool selection quality, agent efficiency, and more, score every trace in real time. The agent graph visualizes the full agentic trace: every tool call, its parameters, its output, and how it chains into subsequent steps. No agentic use case ships until the security team confirms the remediation plan is in place.

The Uncomfortable Truth

Every enterprise we speak with is stalling on taking agentic use cases to production. The reason is the same everywhere: they lack observability and monitoring they can trust.

The agents are built. The use cases are validated. The business value is clear.

But the security team still needs to prove OWASP controls are enforced comprehensively, trace every agent interaction end-to-end, demonstrate to regulators that PII is protected at every step, and guarantee that tool-use policies are enforced in practice, beyond documentation.

The gap between "we have an agentic AI platform" and "we have a secure agentic AI platform" is what's keeping hundreds of use cases from reaching production. The enterprises that close this gap first will have an insurmountable advantage: in AI capability, in trust, and in the regulatory standing required to deploy AI at scale in regulated industries.

What Comes Next

The OWASP Top 10 for Agentic AI is the foundation. Operationalizing it requires a central control plane where security teams define and enforce policies across every agent, layered defenses combining heuristic controls and AI-powered guardrails calibrated to the full OWASP threat taxonomy, full-coverage observability with production-speed tracing on every interaction, and the ability to extend the same infrastructure to codify GDPR, EU AI Act, and whatever comes next.

The enterprises that treat OWASP as a checkbox will fail. Those who treat it as the architectural blueprint for agentic AI governance will lead. Learn more in our whitepaper.

Explore Agent Control (GitHub, Apache 2.0) | Galileo Metrics | Runtime Protection | Eval Engineering Book

Based on the OWASP Top 10 for Agentic Applications (Version 2026, December 2025) by the OWASP Gen AI Security Project, Agentic Security Initiative.

Frequently asked questions

What is the OWASP Top 10 for Agentic Applications?

Published by the OWASP Gen AI Security Project in December 2025, the Top 10 for Agentic Applications defines ten risk categories specific to AI agents, identified as ASI01 through ASI10. It covers threats like goal hijack, tool misuse, identity abuse, memory poisoning, cascading failures, and rogue agents. CISOs and security architects now use it as the standard framework for evaluating whether agentic systems are production-ready.

What is a central control plane for AI agents?

A central control plane is the architectural layer where security teams define and enforce policies across every agent in production, regardless of which framework or model each agent uses. Policies live on a server, not in agent code, so a single rule update propagates to your entire fleet within seconds. This pattern separates governance from development, which is what enterprise CISOs and auditors require.

Why do enterprises treat agent-level controls as separate from LLM-level controls?

A static LLM that gets prompt-injected produces one bad response. An agent that gets hijacked chains tool calls, queries databases, and delegates to other agents, all autonomously executing the attacker's objective. The blast radius is orders of magnitude larger. Multiple banks now run separate procurement processes for agent controls, with distinct requirements documents covering prompt monitoring, blocking, and policy configuration that LLM-level tooling does not address.

How do security teams enforce OWASP controls across hundreds of AI agents at once?

They centralize policy definition on a control server and enforce it through lightweight hooks in agent code. Instead of hardcoding regex and rule logic into each agent, which breaks every time a threat evolves, one policy update propagates to every connected agent in seconds. Compliance and risk teams can adjust rules through a UI without filing engineering tickets, which is what scaling agentic AI in regulated industries requires.

How does Galileo support OWASP-aligned governance for agentic AI in regulated industries?

Galileo pairs Agent Control, an open-source policy server that enforces centralized rules across every connected agent, with the Galileo platform's pre-built metrics and Runtime Protection. Luna-2 small language models score every input and tool output for prompt injection, PII leakage, and context adherence in under 200ms. Together, this gives security teams in financial services and healthcare an auditable trail mapped to OWASP ASI01 through ASI10.

As agentic AI moves from prototype to production, the biggest question has shifted. Every enterprise already knows it needs security controls. The real question is: who owns them, how are they enforced, and can they scale across hundreds of use cases simultaneously?

The Agentic AI Security Paradox

Every major financial institution is building agentic AI. Client preparation tools, autonomous copilots, consumer-facing assistants. The use cases are multiplying fast. But there's a paradox: the more agentic use cases an enterprise builds, the harder it becomes to secure them.

Traditional application security breaks down in the agentic world because agents make autonomous decisions, chain together tool calls, interact with databases, and process sensitive information, often across multiple steps where a single vulnerability can cascade into a full-blown breach.

This is where OWASP comes in. The OWASP Top 10 for Agentic Applications, alongside its companion 17-threat model, has quickly become the de facto security framework that enterprises are rallying around. But having a framework is one thing. Operationalizing it at enterprise scale is an entirely different challenge.

Notably, enterprises are increasingly treating agent-level controls as a distinct governance category, separate from LLM-level governance:

"Agentic AI requires separate control mechanisms, distinct from LLM-level controls."

— CISO office, global bank

Multiple enterprise procurement processes now explicitly name "agent control" as a standalone purchase category, distinct from observability or developer tooling.

This blog breaks down how large enterprises, particularly in financial services, are turning OWASP from a PDF document into enforceable, auditable, centrally governed controls for agentic AI.

Why OWASP? Why Now?

Multiple regulatory pressures are converging simultaneously. EU AI Act audits begin in August 2026, requiring demonstrable compliance. GDPR continues to impose strict data-handling requirements on any AI system that processes personal data. Internal governance mandates at large banks require security sign-off before any agentic use case is deployed to production. This is already happening:

One global bank's CISO team cited EU AI Act compliance as the explicit driver for a formal requirements document covering prompt monitoring, prompt blocking, real-time reporting, and policy configuration for their agentic AI systems.

OWASP has emerged as the preferred starting point because it is the most concrete and repeatable. While the EU AI Act is broad and has undergone countless revisions, OWASP provides specific threat scenarios, attack vectors, and remediation guidance that security teams can directly map to their agentic platforms.

OWASP is just the starting point, with much more to build on top. Enterprises are already asking: "If we can codify OWASP into enforceable controls, can we do the same for GDPR? For the EU AI Act?" The answer is yes, and the architecture required to do it is the same central control plane described later in this post.

Whitepaper: https://galileo.ai/owasp-whitepaper

The Threats Are Real, and They're Not What You Think

When most people think about AI security threats, they imagine external hackers crafting adversarial prompts. The reality inside large enterprises is very different.

Consider a bank with 500,000 employees. The primary concern is the insider threat. It's remarkably easy for someone to pass enough background checks to gain network access, and once inside, agentic AI systems become powerful tools for unauthorized data access, privilege escalation, and information extraction.

The OWASP Top 10 for Agentic Applications captures this reality across its threat categories:

Threat | Enterprise Concern | Why It Matters at Scale |

ASI01 Agent Goal Hijack | Prompt injection overrides the agent's intended objective | A single hijacked agent can expose data across the entire platform |

ASI02 Tool Misuse and Exploitation | Agents invoking tools outside the intended scope | Autonomous tool calls trigger unintended downstream actions |

ASI03 Identity and Privilege Abuse | Employees accessing others' sensitive data | Internal bad actors exploit agent capabilities at scale |

ASI04 Agentic Supply Chain | Compromised MCP servers, poisoned tool definitions | A single malicious plugin propagates across every agent that uses it |

ASI05 Unexpected Code Execution | Agents generating and running arbitrary code | One injected code block can compromise the host environment |

ASI06 Memory and Context Poisoning | Poisoned documents altering agent behavior | Corrupted retrieval sources produce dangerous outputs across sessions |

ASI07 Insecure Inter-Agent Communication | Forged or manipulated agent-to-agent messages | Multi-agent architectures amplify a single compromise into a coordinated attack |

ASI08 Cascading Failures | Agent-to-agent misinformation propagation | Consumer-facing agents (e.g., financial advisors) carry extreme reputational risk |

ASI09 Human-Agent Trust Exploitation | Users over-trusting agent outputs without verification | Decisions based on unverified agent outputs create liability exposure |

ASI10 Rogue Agents | Agents operating outside their intended boundaries | Unmonitored agents accumulate drift until a breach surfaces the problem |

The key insight: prompt injection alone has numerous flavors. Zero-shot attacks, indirect injection via retrieved documents, multi-turn manipulation, cross-agent injection, and more. A single "prompt injection guardrail" is insufficient. Enterprises need coverage mapped to the full OWASP threat taxonomy.

From the Field: How a Top-4 U.S. Bank Is Operationalizing OWASP

To understand how this plays out in practice, consider the journey of one of the largest financial institutions in the United States: a bank with over 500,000 employees, dozens of agentic AI use cases in development, and a dedicated security organization tasked with ensuring none of them go to production without ironclad controls.

The Starting Point: A Centralized Agentic Platform

The bank built a centralized agentic AI platform, an orchestration layer through which every agentic use case must be deployed. Whether it's a client preparation agent for wealth advisors, an internal copilot for operations, or a consumer-facing assistant in their retail banking app, every agent flows through this single platform.

The platform owner's mandate was clear: security controls should be centrally defined, centrally enforced, and invisible to the application developer. Application teams focus on building; the platform handles the rest.

The Security Audit: Mapping OWASP to Real Use Cases

The bank's central security team began auditing every agentic use case against the OWASP Top 10 for Agents. For each use case, they created detailed scenario maps: scenario IDs tied to specific ASI threat categories, attack narratives describing how each threat could manifest, risk scores based on likelihood and business impact, and required controls with specific implementation guidance.

For example, their agentic client preparation tool (used by wealth advisors to prepare for client meetings) was mapped against threats like: "What if an insider uses prompt injection to extract another advisor's client portfolio data?" "What if the agent hallucinates financial performance data that gets presented to a client?" "What if the agent's tool calls inadvertently modify CRM records?"

Each scenario was documented, scored, and assigned a remediation plan.

The Three Threats That Dominated Every Conversation

While all 10 OWASP categories matter, three consistently rose to the top of every risk assessment.

1. Prompt Injection: The Hydra-Headed Threat

Prompt injection was the single most discussed threat in every security review. What made it uniquely challenging was the sheer number of variants the bank had to account for: direct injection (users crafting explicit override instructions), indirect injection via retrieved context (poisoned documents in the knowledge base), zero-shot attacks (novel patterns exploiting instruction-following behavior), multi-turn manipulation (slow conversational steering across exchanges), and cross-agent injection (one compromised agent passing malicious instructions to downstream agents through shared context).

The bank's security team found this especially alarming because their initial prompt injection guardrail covered only approximately 2 of 10 OWASP-defined injection scenarios. The training data behind their detection model was narrowly focused on direct injection patterns, leaving indirect, zero-shot, and multi-turn variants almost entirely undetected.

This created a dangerous false sense of security. The guardrail reported low injection rates, while entire attack categories were invisible.

The lesson: a prompt injection strategy requires far more than a single detector. Enterprises need coverage mapped to every OWASP-defined variant, with an explicit gap analysis and continuous expansion of training data. This is why Galileo's Prompt Injection metric is trained against the full OWASP injection taxonomy and evaluates both user inputs and retrieved content, catching indirect injection from poisoned documents that input-only detection misses.

The bank's remediation approach layers four levels of defense: input sanitization (heuristic pattern matching and format validation), SLM-based detection (AI guardrails trained on the full OWASP injection taxonomy, including zero-shot and indirect variants), output validation (response coherence checks, data leakage detection, behavioral drift monitoring), and multi-turn context analysis (conversation trajectory tracking, intent shift detection, cross-agent instruction propagation monitoring).

2. PII Leakage: The Silent Compliance Killer

For a bank handling millions of customers' financial data, PII leakage through agentic systems is an existential risk: both a security concern and a regulatory one.

The scenarios that worried the security team most: cross-client data exposure (one client's information surfacing during another client's session due to context window pollution), PII in agent reasoning chains (intermediate steps containing sensitive data that gets logged or cached, even when the final output is clean), and embedding leakage (PII in vector databases retrieved through semantic similarity rather than explicit queries, making traditional access controls ineffective).

The challenge is that traditional PII detection (regex for SSNs, credit card patterns) catches the obvious cases but misses contextual PII. A combination of name, account activity, and location constitutes sensitive information even if no single field triggers a pattern match.

Across the enterprises we work with, PII handling is a gating requirement.

One bank requires dual-value storage: payloads submitted with both a redacted version (for display and logging) and an unredacted version (for processing), with TTL-based deletion so that regulated data is purged within hours.

— Global bank, CISO requirements

A major platform company found that entire production sessions had to be redacted before they could even be used for evaluation, because authentication and PII data were woven throughout.

— Major SaaS platform, trust and control team

A cybersecurity team at another financial institution framed their entire engagement around DLP and PII guardrails, with the security team (not the AI team) driving every requirement.

— Top-10 U.S. bank, cybersecurity team

This is where Galileo's PII/CPNI metric goes beyond regex-based detection, scanning tool outputs for sensitive data patterns before they leave the agent. For contextual PII that pre-built metrics miss, Galileo's Eval Engineering methodology lets compliance teams build custom evaluators trained on their institution's specific data patterns, closing the gap between generic detection and what your specific institution actually needs to flag.

"We don't need to prove that PII doesn't leak 99% of the time. We need to prove it doesn't leak, period."

— Security team, top-4 U.S. bank

3. Tool Misuse: The Underestimated Agent Risk

Tool misuse (ASI02) is perhaps the most uniquely agentic threat in the OWASP taxonomy, and the one that traditional security teams are least prepared for.

In agentic systems, agents go far beyond generating text. They take actions. They call APIs, query databases, trigger workflows, and modify records. Each tool call is a potential attack surface.

The scenarios that emerged during the bank's audit: scope creep (an agent authorized to read from a database using write capabilities because permissions weren't sufficiently granular), tool chaining exploitation (individually benign tool calls chained to achieve a harmful outcome, for example "lookup contact" + "send email" = data exfiltration), and unintended downstream effects (a single tool call to update a client's risk profile triggering automated portfolio rebalancing).

The critical engineering challenge: tool-use policies must be defined at the action level, far more granular than the agent level. Saying "this agent can use the CRM tool" leaves too much open. The policy must specify which operations (read, write, delete), on which data (own clients only), under which conditions (only during an active client session).

Tool | Operation | Scope | Conditions |

CRM API | READ | Own clients | Active session only |

CRM API | WRITE | BLOCKED | Requires human approval |

SEND | Internal only | Pre-approved templates only | |

Database | QUERY | Filtered views | No cross-client joins |

Database | MODIFY | BLOCKED | All writes require human-in-the-loop |

This is exactly what Agent Control was built for. Instead of embedding these policies in each agent's code, they're defined declaratively on a centralized server and enforced at every tool call boundary:

# Agent Control: block unauthorized CRM writes, enforced across ALL agents { "name": "block-crm-writes", "definition": { "enabled": True, "execution": "server", "scope": { "step_types": ["tool"], "step_names": ["crm_update", "crm_delete"], "stages": ["pre"], }, "condition": { "selector": {"path": "*"}, "evaluator": { "name": "regex", "config": {"pattern": r".*"}, }, }, "action": {"decision": "deny"}, } }

One policy definition. Every agent enforces it within seconds. No redeployment, no code changes, no agent-side logic. The security team updates policies on the server; every connected agent, whether it runs on ADK, LangGraph, CrewAI, or a custom stack, picks them up immediately.

This matters because policies must keep pace with a rapidly evolving threat landscape:

"Risk understanding evolves over time. Policies must be updatable without code redeployment."

— CTO office, global bank

When a new threat vector surfaces or a regulator issues updated guidance, the security team pushes an updated policy, and every agent in production enforces it within seconds. No sprint planning, no release cycle, no coordination across application teams. (GitHub, Apache 2.0)

Why Custom Heuristic Code Wasn't Enough

Initially, the bank partnered with a major consulting firm to build custom heuristic code for OWASP controls: regex patterns, keyword filters, and rule-based access controls. This approach hit three walls:

Maintenance burden. Every new threat variant required manual code updates. The threat landscape was evolving faster than the rules could be written.

Coverage gaps. Heuristic controls fundamentally cannot detect context-dependent threats like multi-turn prompt injection or cascading hallucinations.

Scalability. As the number of agentic use cases grew, maintaining per-agent custom code became untenable. The security team needed controls that could be defined once and deployed across all agents simultaneously.

This is when the conversation shifted from "build custom controls" to "adopt a platform that can enforce OWASP controls natively."

The Enterprise Governance Model: Security Teams Own the Controls

This is where most conversations about agentic AI security go wrong.

There's a common assumption in the AI industry: the developers building the agents should set the security controls. Enterprises categorically reject this model. In every major FSI engagement, the message is consistent: security teams own the controls. The reasoning is straightforward. Developers optimize for functionality. Asking them to define security policies as well creates conflicts of interest. Security expertise is centralized for a reason: consistency, auditability, and accountability require a single team with enterprise-wide visibility. Regulatory compliance demands governance. Auditors expect one coherent security story, and 50 different teams making 50 different security decisions is the opposite of that. CISOs need to sign off, and that requires enterprise-wide visibility into the organization's risk posture.

What enterprises actually want is a model where the AI engineer building an app simply calls into the central platform, builds their use case, and the controls are already there. The controls are already there. Whether to use regex or an SLM is a decision the security team has already made. The security team has already made those decisions, and the platform enforces them automatically.

Critically, this extends beyond security teams:

"Both technical and non-technical teams must be able to participate in the evaluation workflow."

— Platform engineering lead, major SaaS company

Product managers, compliance officers, and risk analysts need to define policies, review agent behavior, and audit traces without filing engineering tickets. Policy configuration must be UI-driven, not code-driven. If your governance tooling requires a Python script to update a policy, you have already lost the non-technical stakeholders who are ultimately accountable for compliance.

How It Works: The Central Control Plane

Phase 1: Build. AI engineers build agentic use cases: client preparation tools, internal copilots, consumer-facing assistants. Security controls are handled entirely by the central team. All use cases deploy on a centralized platform.

Phase 2: Audit. The central security team audits every use case against all OWASP ASI01–ASI10 categories and the 17-threat model. Specific attack scenarios are identified, risk-scored, and documented.

Phase 3: Define Controls. Based on the audit, the security team defines policies on the Agent Control server. These are declarative rules that specify scope (which tool, which stage), condition (what to match), and action (deny, warn, or log). This is a non-negotiable, top-down process.

Phase 4: Implement and Monitor. Controls are centrally managed. Galileo's 31 pre-built metrics, spanning prompt injection detection, PII/CPNI scanning, context adherence, tool selection quality, agent efficiency, and more, score every trace in real time. The agent graph visualizes the full agentic trace: every tool call, its parameters, its output, and how it chains into subsequent steps. No agentic use case ships until the security team confirms the remediation plan is in place.

The Uncomfortable Truth

Every enterprise we speak with is stalling on taking agentic use cases to production. The reason is the same everywhere: they lack observability and monitoring they can trust.

The agents are built. The use cases are validated. The business value is clear.

But the security team still needs to prove OWASP controls are enforced comprehensively, trace every agent interaction end-to-end, demonstrate to regulators that PII is protected at every step, and guarantee that tool-use policies are enforced in practice, beyond documentation.

The gap between "we have an agentic AI platform" and "we have a secure agentic AI platform" is what's keeping hundreds of use cases from reaching production. The enterprises that close this gap first will have an insurmountable advantage: in AI capability, in trust, and in the regulatory standing required to deploy AI at scale in regulated industries.

What Comes Next

The OWASP Top 10 for Agentic AI is the foundation. Operationalizing it requires a central control plane where security teams define and enforce policies across every agent, layered defenses combining heuristic controls and AI-powered guardrails calibrated to the full OWASP threat taxonomy, full-coverage observability with production-speed tracing on every interaction, and the ability to extend the same infrastructure to codify GDPR, EU AI Act, and whatever comes next.

The enterprises that treat OWASP as a checkbox will fail. Those who treat it as the architectural blueprint for agentic AI governance will lead. Learn more in our whitepaper.

Explore Agent Control (GitHub, Apache 2.0) | Galileo Metrics | Runtime Protection | Eval Engineering Book

Based on the OWASP Top 10 for Agentic Applications (Version 2026, December 2025) by the OWASP Gen AI Security Project, Agentic Security Initiative.

Frequently asked questions

What is the OWASP Top 10 for Agentic Applications?

Published by the OWASP Gen AI Security Project in December 2025, the Top 10 for Agentic Applications defines ten risk categories specific to AI agents, identified as ASI01 through ASI10. It covers threats like goal hijack, tool misuse, identity abuse, memory poisoning, cascading failures, and rogue agents. CISOs and security architects now use it as the standard framework for evaluating whether agentic systems are production-ready.

What is a central control plane for AI agents?

A central control plane is the architectural layer where security teams define and enforce policies across every agent in production, regardless of which framework or model each agent uses. Policies live on a server, not in agent code, so a single rule update propagates to your entire fleet within seconds. This pattern separates governance from development, which is what enterprise CISOs and auditors require.

Why do enterprises treat agent-level controls as separate from LLM-level controls?

A static LLM that gets prompt-injected produces one bad response. An agent that gets hijacked chains tool calls, queries databases, and delegates to other agents, all autonomously executing the attacker's objective. The blast radius is orders of magnitude larger. Multiple banks now run separate procurement processes for agent controls, with distinct requirements documents covering prompt monitoring, blocking, and policy configuration that LLM-level tooling does not address.

How do security teams enforce OWASP controls across hundreds of AI agents at once?

They centralize policy definition on a control server and enforce it through lightweight hooks in agent code. Instead of hardcoding regex and rule logic into each agent, which breaks every time a threat evolves, one policy update propagates to every connected agent in seconds. Compliance and risk teams can adjust rules through a UI without filing engineering tickets, which is what scaling agentic AI in regulated industries requires.

How does Galileo support OWASP-aligned governance for agentic AI in regulated industries?

Galileo pairs Agent Control, an open-source policy server that enforces centralized rules across every connected agent, with the Galileo platform's pre-built metrics and Runtime Protection. Luna-2 small language models score every input and tool output for prompt injection, PII leakage, and context adherence in under 200ms. Together, this gives security teams in financial services and healthcare an auditable trail mapped to OWASP ASI01 through ASI10.

As agentic AI moves from prototype to production, the biggest question has shifted. Every enterprise already knows it needs security controls. The real question is: who owns them, how are they enforced, and can they scale across hundreds of use cases simultaneously?

The Agentic AI Security Paradox

Every major financial institution is building agentic AI. Client preparation tools, autonomous copilots, consumer-facing assistants. The use cases are multiplying fast. But there's a paradox: the more agentic use cases an enterprise builds, the harder it becomes to secure them.

Traditional application security breaks down in the agentic world because agents make autonomous decisions, chain together tool calls, interact with databases, and process sensitive information, often across multiple steps where a single vulnerability can cascade into a full-blown breach.

This is where OWASP comes in. The OWASP Top 10 for Agentic Applications, alongside its companion 17-threat model, has quickly become the de facto security framework that enterprises are rallying around. But having a framework is one thing. Operationalizing it at enterprise scale is an entirely different challenge.

Notably, enterprises are increasingly treating agent-level controls as a distinct governance category, separate from LLM-level governance:

"Agentic AI requires separate control mechanisms, distinct from LLM-level controls."

— CISO office, global bank

Multiple enterprise procurement processes now explicitly name "agent control" as a standalone purchase category, distinct from observability or developer tooling.

This blog breaks down how large enterprises, particularly in financial services, are turning OWASP from a PDF document into enforceable, auditable, centrally governed controls for agentic AI.

Why OWASP? Why Now?

Multiple regulatory pressures are converging simultaneously. EU AI Act audits begin in August 2026, requiring demonstrable compliance. GDPR continues to impose strict data-handling requirements on any AI system that processes personal data. Internal governance mandates at large banks require security sign-off before any agentic use case is deployed to production. This is already happening:

One global bank's CISO team cited EU AI Act compliance as the explicit driver for a formal requirements document covering prompt monitoring, prompt blocking, real-time reporting, and policy configuration for their agentic AI systems.

OWASP has emerged as the preferred starting point because it is the most concrete and repeatable. While the EU AI Act is broad and has undergone countless revisions, OWASP provides specific threat scenarios, attack vectors, and remediation guidance that security teams can directly map to their agentic platforms.

OWASP is just the starting point, with much more to build on top. Enterprises are already asking: "If we can codify OWASP into enforceable controls, can we do the same for GDPR? For the EU AI Act?" The answer is yes, and the architecture required to do it is the same central control plane described later in this post.

Whitepaper: https://galileo.ai/owasp-whitepaper

The Threats Are Real, and They're Not What You Think

When most people think about AI security threats, they imagine external hackers crafting adversarial prompts. The reality inside large enterprises is very different.

Consider a bank with 500,000 employees. The primary concern is the insider threat. It's remarkably easy for someone to pass enough background checks to gain network access, and once inside, agentic AI systems become powerful tools for unauthorized data access, privilege escalation, and information extraction.

The OWASP Top 10 for Agentic Applications captures this reality across its threat categories:

Threat | Enterprise Concern | Why It Matters at Scale |

ASI01 Agent Goal Hijack | Prompt injection overrides the agent's intended objective | A single hijacked agent can expose data across the entire platform |

ASI02 Tool Misuse and Exploitation | Agents invoking tools outside the intended scope | Autonomous tool calls trigger unintended downstream actions |

ASI03 Identity and Privilege Abuse | Employees accessing others' sensitive data | Internal bad actors exploit agent capabilities at scale |

ASI04 Agentic Supply Chain | Compromised MCP servers, poisoned tool definitions | A single malicious plugin propagates across every agent that uses it |

ASI05 Unexpected Code Execution | Agents generating and running arbitrary code | One injected code block can compromise the host environment |

ASI06 Memory and Context Poisoning | Poisoned documents altering agent behavior | Corrupted retrieval sources produce dangerous outputs across sessions |

ASI07 Insecure Inter-Agent Communication | Forged or manipulated agent-to-agent messages | Multi-agent architectures amplify a single compromise into a coordinated attack |

ASI08 Cascading Failures | Agent-to-agent misinformation propagation | Consumer-facing agents (e.g., financial advisors) carry extreme reputational risk |

ASI09 Human-Agent Trust Exploitation | Users over-trusting agent outputs without verification | Decisions based on unverified agent outputs create liability exposure |

ASI10 Rogue Agents | Agents operating outside their intended boundaries | Unmonitored agents accumulate drift until a breach surfaces the problem |

The key insight: prompt injection alone has numerous flavors. Zero-shot attacks, indirect injection via retrieved documents, multi-turn manipulation, cross-agent injection, and more. A single "prompt injection guardrail" is insufficient. Enterprises need coverage mapped to the full OWASP threat taxonomy.

From the Field: How a Top-4 U.S. Bank Is Operationalizing OWASP

To understand how this plays out in practice, consider the journey of one of the largest financial institutions in the United States: a bank with over 500,000 employees, dozens of agentic AI use cases in development, and a dedicated security organization tasked with ensuring none of them go to production without ironclad controls.

The Starting Point: A Centralized Agentic Platform

The bank built a centralized agentic AI platform, an orchestration layer through which every agentic use case must be deployed. Whether it's a client preparation agent for wealth advisors, an internal copilot for operations, or a consumer-facing assistant in their retail banking app, every agent flows through this single platform.

The platform owner's mandate was clear: security controls should be centrally defined, centrally enforced, and invisible to the application developer. Application teams focus on building; the platform handles the rest.

The Security Audit: Mapping OWASP to Real Use Cases

The bank's central security team began auditing every agentic use case against the OWASP Top 10 for Agents. For each use case, they created detailed scenario maps: scenario IDs tied to specific ASI threat categories, attack narratives describing how each threat could manifest, risk scores based on likelihood and business impact, and required controls with specific implementation guidance.

For example, their agentic client preparation tool (used by wealth advisors to prepare for client meetings) was mapped against threats like: "What if an insider uses prompt injection to extract another advisor's client portfolio data?" "What if the agent hallucinates financial performance data that gets presented to a client?" "What if the agent's tool calls inadvertently modify CRM records?"

Each scenario was documented, scored, and assigned a remediation plan.

The Three Threats That Dominated Every Conversation

While all 10 OWASP categories matter, three consistently rose to the top of every risk assessment.

1. Prompt Injection: The Hydra-Headed Threat

Prompt injection was the single most discussed threat in every security review. What made it uniquely challenging was the sheer number of variants the bank had to account for: direct injection (users crafting explicit override instructions), indirect injection via retrieved context (poisoned documents in the knowledge base), zero-shot attacks (novel patterns exploiting instruction-following behavior), multi-turn manipulation (slow conversational steering across exchanges), and cross-agent injection (one compromised agent passing malicious instructions to downstream agents through shared context).

The bank's security team found this especially alarming because their initial prompt injection guardrail covered only approximately 2 of 10 OWASP-defined injection scenarios. The training data behind their detection model was narrowly focused on direct injection patterns, leaving indirect, zero-shot, and multi-turn variants almost entirely undetected.

This created a dangerous false sense of security. The guardrail reported low injection rates, while entire attack categories were invisible.

The lesson: a prompt injection strategy requires far more than a single detector. Enterprises need coverage mapped to every OWASP-defined variant, with an explicit gap analysis and continuous expansion of training data. This is why Galileo's Prompt Injection metric is trained against the full OWASP injection taxonomy and evaluates both user inputs and retrieved content, catching indirect injection from poisoned documents that input-only detection misses.

The bank's remediation approach layers four levels of defense: input sanitization (heuristic pattern matching and format validation), SLM-based detection (AI guardrails trained on the full OWASP injection taxonomy, including zero-shot and indirect variants), output validation (response coherence checks, data leakage detection, behavioral drift monitoring), and multi-turn context analysis (conversation trajectory tracking, intent shift detection, cross-agent instruction propagation monitoring).

2. PII Leakage: The Silent Compliance Killer

For a bank handling millions of customers' financial data, PII leakage through agentic systems is an existential risk: both a security concern and a regulatory one.

The scenarios that worried the security team most: cross-client data exposure (one client's information surfacing during another client's session due to context window pollution), PII in agent reasoning chains (intermediate steps containing sensitive data that gets logged or cached, even when the final output is clean), and embedding leakage (PII in vector databases retrieved through semantic similarity rather than explicit queries, making traditional access controls ineffective).

The challenge is that traditional PII detection (regex for SSNs, credit card patterns) catches the obvious cases but misses contextual PII. A combination of name, account activity, and location constitutes sensitive information even if no single field triggers a pattern match.

Across the enterprises we work with, PII handling is a gating requirement.

One bank requires dual-value storage: payloads submitted with both a redacted version (for display and logging) and an unredacted version (for processing), with TTL-based deletion so that regulated data is purged within hours.

— Global bank, CISO requirements

A major platform company found that entire production sessions had to be redacted before they could even be used for evaluation, because authentication and PII data were woven throughout.

— Major SaaS platform, trust and control team

A cybersecurity team at another financial institution framed their entire engagement around DLP and PII guardrails, with the security team (not the AI team) driving every requirement.

— Top-10 U.S. bank, cybersecurity team

This is where Galileo's PII/CPNI metric goes beyond regex-based detection, scanning tool outputs for sensitive data patterns before they leave the agent. For contextual PII that pre-built metrics miss, Galileo's Eval Engineering methodology lets compliance teams build custom evaluators trained on their institution's specific data patterns, closing the gap between generic detection and what your specific institution actually needs to flag.

"We don't need to prove that PII doesn't leak 99% of the time. We need to prove it doesn't leak, period."

— Security team, top-4 U.S. bank

3. Tool Misuse: The Underestimated Agent Risk

Tool misuse (ASI02) is perhaps the most uniquely agentic threat in the OWASP taxonomy, and the one that traditional security teams are least prepared for.

In agentic systems, agents go far beyond generating text. They take actions. They call APIs, query databases, trigger workflows, and modify records. Each tool call is a potential attack surface.

The scenarios that emerged during the bank's audit: scope creep (an agent authorized to read from a database using write capabilities because permissions weren't sufficiently granular), tool chaining exploitation (individually benign tool calls chained to achieve a harmful outcome, for example "lookup contact" + "send email" = data exfiltration), and unintended downstream effects (a single tool call to update a client's risk profile triggering automated portfolio rebalancing).

The critical engineering challenge: tool-use policies must be defined at the action level, far more granular than the agent level. Saying "this agent can use the CRM tool" leaves too much open. The policy must specify which operations (read, write, delete), on which data (own clients only), under which conditions (only during an active client session).

Tool | Operation | Scope | Conditions |

CRM API | READ | Own clients | Active session only |

CRM API | WRITE | BLOCKED | Requires human approval |

SEND | Internal only | Pre-approved templates only | |

Database | QUERY | Filtered views | No cross-client joins |

Database | MODIFY | BLOCKED | All writes require human-in-the-loop |

This is exactly what Agent Control was built for. Instead of embedding these policies in each agent's code, they're defined declaratively on a centralized server and enforced at every tool call boundary:

# Agent Control: block unauthorized CRM writes, enforced across ALL agents { "name": "block-crm-writes", "definition": { "enabled": True, "execution": "server", "scope": { "step_types": ["tool"], "step_names": ["crm_update", "crm_delete"], "stages": ["pre"], }, "condition": { "selector": {"path": "*"}, "evaluator": { "name": "regex", "config": {"pattern": r".*"}, }, }, "action": {"decision": "deny"}, } }

One policy definition. Every agent enforces it within seconds. No redeployment, no code changes, no agent-side logic. The security team updates policies on the server; every connected agent, whether it runs on ADK, LangGraph, CrewAI, or a custom stack, picks them up immediately.

This matters because policies must keep pace with a rapidly evolving threat landscape:

"Risk understanding evolves over time. Policies must be updatable without code redeployment."

— CTO office, global bank

When a new threat vector surfaces or a regulator issues updated guidance, the security team pushes an updated policy, and every agent in production enforces it within seconds. No sprint planning, no release cycle, no coordination across application teams. (GitHub, Apache 2.0)

Why Custom Heuristic Code Wasn't Enough

Initially, the bank partnered with a major consulting firm to build custom heuristic code for OWASP controls: regex patterns, keyword filters, and rule-based access controls. This approach hit three walls:

Maintenance burden. Every new threat variant required manual code updates. The threat landscape was evolving faster than the rules could be written.

Coverage gaps. Heuristic controls fundamentally cannot detect context-dependent threats like multi-turn prompt injection or cascading hallucinations.

Scalability. As the number of agentic use cases grew, maintaining per-agent custom code became untenable. The security team needed controls that could be defined once and deployed across all agents simultaneously.

This is when the conversation shifted from "build custom controls" to "adopt a platform that can enforce OWASP controls natively."

The Enterprise Governance Model: Security Teams Own the Controls

This is where most conversations about agentic AI security go wrong.

There's a common assumption in the AI industry: the developers building the agents should set the security controls. Enterprises categorically reject this model. In every major FSI engagement, the message is consistent: security teams own the controls. The reasoning is straightforward. Developers optimize for functionality. Asking them to define security policies as well creates conflicts of interest. Security expertise is centralized for a reason: consistency, auditability, and accountability require a single team with enterprise-wide visibility. Regulatory compliance demands governance. Auditors expect one coherent security story, and 50 different teams making 50 different security decisions is the opposite of that. CISOs need to sign off, and that requires enterprise-wide visibility into the organization's risk posture.

What enterprises actually want is a model where the AI engineer building an app simply calls into the central platform, builds their use case, and the controls are already there. The controls are already there. Whether to use regex or an SLM is a decision the security team has already made. The security team has already made those decisions, and the platform enforces them automatically.

Critically, this extends beyond security teams:

"Both technical and non-technical teams must be able to participate in the evaluation workflow."

— Platform engineering lead, major SaaS company

Product managers, compliance officers, and risk analysts need to define policies, review agent behavior, and audit traces without filing engineering tickets. Policy configuration must be UI-driven, not code-driven. If your governance tooling requires a Python script to update a policy, you have already lost the non-technical stakeholders who are ultimately accountable for compliance.

How It Works: The Central Control Plane

Phase 1: Build. AI engineers build agentic use cases: client preparation tools, internal copilots, consumer-facing assistants. Security controls are handled entirely by the central team. All use cases deploy on a centralized platform.

Phase 2: Audit. The central security team audits every use case against all OWASP ASI01–ASI10 categories and the 17-threat model. Specific attack scenarios are identified, risk-scored, and documented.

Phase 3: Define Controls. Based on the audit, the security team defines policies on the Agent Control server. These are declarative rules that specify scope (which tool, which stage), condition (what to match), and action (deny, warn, or log). This is a non-negotiable, top-down process.

Phase 4: Implement and Monitor. Controls are centrally managed. Galileo's 31 pre-built metrics, spanning prompt injection detection, PII/CPNI scanning, context adherence, tool selection quality, agent efficiency, and more, score every trace in real time. The agent graph visualizes the full agentic trace: every tool call, its parameters, its output, and how it chains into subsequent steps. No agentic use case ships until the security team confirms the remediation plan is in place.

The Uncomfortable Truth

Every enterprise we speak with is stalling on taking agentic use cases to production. The reason is the same everywhere: they lack observability and monitoring they can trust.

The agents are built. The use cases are validated. The business value is clear.

But the security team still needs to prove OWASP controls are enforced comprehensively, trace every agent interaction end-to-end, demonstrate to regulators that PII is protected at every step, and guarantee that tool-use policies are enforced in practice, beyond documentation.

The gap between "we have an agentic AI platform" and "we have a secure agentic AI platform" is what's keeping hundreds of use cases from reaching production. The enterprises that close this gap first will have an insurmountable advantage: in AI capability, in trust, and in the regulatory standing required to deploy AI at scale in regulated industries.

What Comes Next

The OWASP Top 10 for Agentic AI is the foundation. Operationalizing it requires a central control plane where security teams define and enforce policies across every agent, layered defenses combining heuristic controls and AI-powered guardrails calibrated to the full OWASP threat taxonomy, full-coverage observability with production-speed tracing on every interaction, and the ability to extend the same infrastructure to codify GDPR, EU AI Act, and whatever comes next.

The enterprises that treat OWASP as a checkbox will fail. Those who treat it as the architectural blueprint for agentic AI governance will lead. Learn more in our whitepaper.

Explore Agent Control (GitHub, Apache 2.0) | Galileo Metrics | Runtime Protection | Eval Engineering Book

Based on the OWASP Top 10 for Agentic Applications (Version 2026, December 2025) by the OWASP Gen AI Security Project, Agentic Security Initiative.

Frequently asked questions

What is the OWASP Top 10 for Agentic Applications?

Published by the OWASP Gen AI Security Project in December 2025, the Top 10 for Agentic Applications defines ten risk categories specific to AI agents, identified as ASI01 through ASI10. It covers threats like goal hijack, tool misuse, identity abuse, memory poisoning, cascading failures, and rogue agents. CISOs and security architects now use it as the standard framework for evaluating whether agentic systems are production-ready.

What is a central control plane for AI agents?

A central control plane is the architectural layer where security teams define and enforce policies across every agent in production, regardless of which framework or model each agent uses. Policies live on a server, not in agent code, so a single rule update propagates to your entire fleet within seconds. This pattern separates governance from development, which is what enterprise CISOs and auditors require.

Why do enterprises treat agent-level controls as separate from LLM-level controls?

A static LLM that gets prompt-injected produces one bad response. An agent that gets hijacked chains tool calls, queries databases, and delegates to other agents, all autonomously executing the attacker's objective. The blast radius is orders of magnitude larger. Multiple banks now run separate procurement processes for agent controls, with distinct requirements documents covering prompt monitoring, blocking, and policy configuration that LLM-level tooling does not address.

How do security teams enforce OWASP controls across hundreds of AI agents at once?

They centralize policy definition on a control server and enforce it through lightweight hooks in agent code. Instead of hardcoding regex and rule logic into each agent, which breaks every time a threat evolves, one policy update propagates to every connected agent in seconds. Compliance and risk teams can adjust rules through a UI without filing engineering tickets, which is what scaling agentic AI in regulated industries requires.

How does Galileo support OWASP-aligned governance for agentic AI in regulated industries?

Galileo pairs Agent Control, an open-source policy server that enforces centralized rules across every connected agent, with the Galileo platform's pre-built metrics and Runtime Protection. Luna-2 small language models score every input and tool output for prompt injection, PII leakage, and context adherence in under 200ms. Together, this gives security teams in financial services and healthcare an auditable trail mapped to OWASP ASI01 through ASI10.

As agentic AI moves from prototype to production, the biggest question has shifted. Every enterprise already knows it needs security controls. The real question is: who owns them, how are they enforced, and can they scale across hundreds of use cases simultaneously?

The Agentic AI Security Paradox

Every major financial institution is building agentic AI. Client preparation tools, autonomous copilots, consumer-facing assistants. The use cases are multiplying fast. But there's a paradox: the more agentic use cases an enterprise builds, the harder it becomes to secure them.

Traditional application security breaks down in the agentic world because agents make autonomous decisions, chain together tool calls, interact with databases, and process sensitive information, often across multiple steps where a single vulnerability can cascade into a full-blown breach.

This is where OWASP comes in. The OWASP Top 10 for Agentic Applications, alongside its companion 17-threat model, has quickly become the de facto security framework that enterprises are rallying around. But having a framework is one thing. Operationalizing it at enterprise scale is an entirely different challenge.

Notably, enterprises are increasingly treating agent-level controls as a distinct governance category, separate from LLM-level governance:

"Agentic AI requires separate control mechanisms, distinct from LLM-level controls."

— CISO office, global bank

Multiple enterprise procurement processes now explicitly name "agent control" as a standalone purchase category, distinct from observability or developer tooling.

This blog breaks down how large enterprises, particularly in financial services, are turning OWASP from a PDF document into enforceable, auditable, centrally governed controls for agentic AI.

Why OWASP? Why Now?

Multiple regulatory pressures are converging simultaneously. EU AI Act audits begin in August 2026, requiring demonstrable compliance. GDPR continues to impose strict data-handling requirements on any AI system that processes personal data. Internal governance mandates at large banks require security sign-off before any agentic use case is deployed to production. This is already happening:

One global bank's CISO team cited EU AI Act compliance as the explicit driver for a formal requirements document covering prompt monitoring, prompt blocking, real-time reporting, and policy configuration for their agentic AI systems.

OWASP has emerged as the preferred starting point because it is the most concrete and repeatable. While the EU AI Act is broad and has undergone countless revisions, OWASP provides specific threat scenarios, attack vectors, and remediation guidance that security teams can directly map to their agentic platforms.

OWASP is just the starting point, with much more to build on top. Enterprises are already asking: "If we can codify OWASP into enforceable controls, can we do the same for GDPR? For the EU AI Act?" The answer is yes, and the architecture required to do it is the same central control plane described later in this post.

Whitepaper: https://galileo.ai/owasp-whitepaper

The Threats Are Real, and They're Not What You Think

When most people think about AI security threats, they imagine external hackers crafting adversarial prompts. The reality inside large enterprises is very different.

Consider a bank with 500,000 employees. The primary concern is the insider threat. It's remarkably easy for someone to pass enough background checks to gain network access, and once inside, agentic AI systems become powerful tools for unauthorized data access, privilege escalation, and information extraction.

The OWASP Top 10 for Agentic Applications captures this reality across its threat categories:

Threat | Enterprise Concern | Why It Matters at Scale |

ASI01 Agent Goal Hijack | Prompt injection overrides the agent's intended objective | A single hijacked agent can expose data across the entire platform |

ASI02 Tool Misuse and Exploitation | Agents invoking tools outside the intended scope | Autonomous tool calls trigger unintended downstream actions |

ASI03 Identity and Privilege Abuse | Employees accessing others' sensitive data | Internal bad actors exploit agent capabilities at scale |

ASI04 Agentic Supply Chain | Compromised MCP servers, poisoned tool definitions | A single malicious plugin propagates across every agent that uses it |

ASI05 Unexpected Code Execution | Agents generating and running arbitrary code | One injected code block can compromise the host environment |

ASI06 Memory and Context Poisoning | Poisoned documents altering agent behavior | Corrupted retrieval sources produce dangerous outputs across sessions |

ASI07 Insecure Inter-Agent Communication | Forged or manipulated agent-to-agent messages | Multi-agent architectures amplify a single compromise into a coordinated attack |

ASI08 Cascading Failures | Agent-to-agent misinformation propagation | Consumer-facing agents (e.g., financial advisors) carry extreme reputational risk |

ASI09 Human-Agent Trust Exploitation | Users over-trusting agent outputs without verification | Decisions based on unverified agent outputs create liability exposure |

ASI10 Rogue Agents | Agents operating outside their intended boundaries | Unmonitored agents accumulate drift until a breach surfaces the problem |

The key insight: prompt injection alone has numerous flavors. Zero-shot attacks, indirect injection via retrieved documents, multi-turn manipulation, cross-agent injection, and more. A single "prompt injection guardrail" is insufficient. Enterprises need coverage mapped to the full OWASP threat taxonomy.

From the Field: How a Top-4 U.S. Bank Is Operationalizing OWASP

To understand how this plays out in practice, consider the journey of one of the largest financial institutions in the United States: a bank with over 500,000 employees, dozens of agentic AI use cases in development, and a dedicated security organization tasked with ensuring none of them go to production without ironclad controls.

The Starting Point: A Centralized Agentic Platform

The bank built a centralized agentic AI platform, an orchestration layer through which every agentic use case must be deployed. Whether it's a client preparation agent for wealth advisors, an internal copilot for operations, or a consumer-facing assistant in their retail banking app, every agent flows through this single platform.

The platform owner's mandate was clear: security controls should be centrally defined, centrally enforced, and invisible to the application developer. Application teams focus on building; the platform handles the rest.

The Security Audit: Mapping OWASP to Real Use Cases